Deepfake technology, which uses artificial intelligence to create and modify videos, images, and audio recordings that place individuals in situations they were never in or put false words in their mouths, has burst onto the scene as the latest technological threat to the democratic political process.

As the 2020 election cycle gets underway, Twitter and Facebook have both recently announced policies for handling synthetic and manipulated media content on their platforms. Reddit and TikTok have also amended their community standards to mention manipulated media, a potential prelude to larger policy changes. And last night, YouTube outlined efforts to address manipulated media in the context of the 2020 electoral environment.

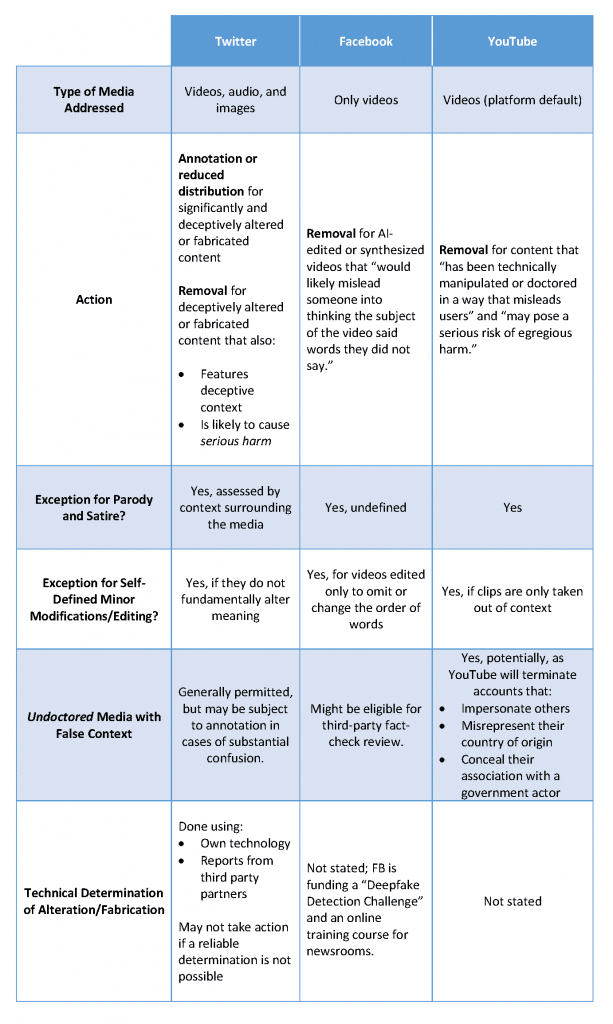

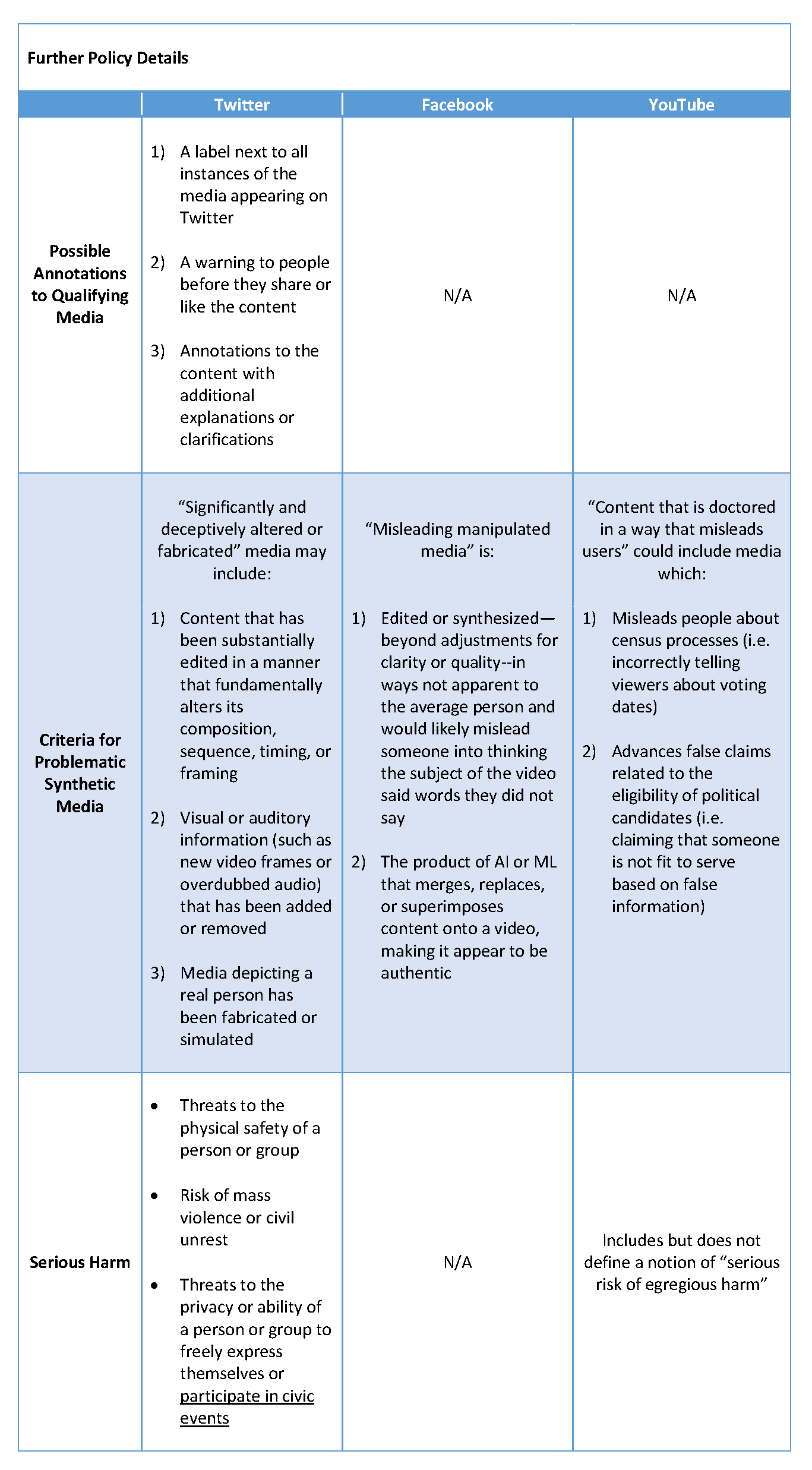

Our side-by-side comparison and analysis of Twitter, Facebook, and YouTube’s policies shows that Facebook focuses on a narrow, technical type of manipulation, while Twitter’s approach contemplates the broader context and impact of manipulated media. YouTube prioritizes election-related threats, but its policy lacks clarity on which types of content will be removed and even on what constitutes manipulated media. All platforms’ policies would benefit from far greater transparency and accountability around challenging and unenviable decision-making in this space.

Key points of comparison are highlighted in the following chart:

Four key takeaways follow:

First, Twitter assesses whether manipulated media is likely to cause “serious harm” in its criteria for removing content, while Facebook does not. YouTube discusses a notion of “egregious harm” but fails to define it. The types of serious harm Twitter identifies include: a) threats to the physical safety or privacy of a person or group; b) the risk of mass violence or civil unrest; and c) threats to the ability of a person or group to freely express themselves or participate in civic events. These criteria are subjective and would be improved through a more transparent determination process. However, they speak to underlying concerns around the impact of manipulated media on democratic political and social life. In particular, identifying a specific interest in protecting civic participation is noteworthy for upholding the health of democratic processes in response to foreign interference. For example, in 2016, the Russian election interference effort included targeted attempts to suppress voter turnout among African Americans — some of which used manipulated media. The “text-to-vote” campaign ran fake ads to fool Hillary Clinton supporters into thinking they could vote early by texting a five-digit number instead of appearing at the polls.

Second, Twitter outlines an option to annotate some manipulated media, while Facebook and YouTube will simply remove offending content. Specifically, Twitter’s policy gives the option of labeling manipulated media, warning users who interact with it, or adding clarifications to it in cases where the media does not both carry deceptive context and have the potential to cause serious harm. While Facebook has said it may use warning labels in specific cases of false information, it has not spelled out a similar, tiered system in policy. And when it comes to deepfakes, removal is the only stated action: it’s all or nothing.

Third, YouTube’s policy is unclear on what constitutes manipulated media and egregious harm. While YouTube specifies manipulated media among the types of content subject to removal, it fails to provide a definition of manipulation. One example refers to a video doctored to make a public official appear deceased, a clear case of manipulation. But another example describes the resurfacing of a 10-year-old video depicting a ballot box being stuffed, which doesn’t necessarily involve technical manipulation but does take the video out of context. YouTube also refers on several occasions to a notion of “egregious harm” as a criterion for removing manipulated video but never defines the term.

Fourth, Facebook’s policy is extremely narrow in scope, especially in comparison with Twitter’s. The policy applies only to videos, and among them, only to those that have been generated or altered through artificial intelligence or machine learning. So-called “cheapfakes,” videos that are misleadingly edited without AI and that have targeted U.S. politicians in recent months, are not covered under Facebook’s policy. In addition, the Facebook policy applies only to audio manipulations of videos, and specifically to manipulations that portray the subject of a video speaking words that in reality they did not. The policy fails to account for deepfakes that spoof actions rather than words. But synthetic or manipulated actions can be just as misleading; the Jim Acosta video that was sped up to present the reporter as aggressively pushing aside a female aide is a case in point. Finally, Facebook also provides an exception for edits that only “omit or change the order of words.” While any manipulated media policy is likely to struggle with false positives given the scope of the challenge, this exception allows manipulators who misleadingly edit out key context for statements, or who splice together spoken words in a different order, to continue to wreak havoc.

Editor’s note: This is a follow-up piece to a January 13 blog post, “Combating the Latest Technological Threat to Democracy: A Comparison of Facebook and Twitter’s Deepfake Policies.”

The views expressed in GMF publications and commentary are the views of the author alone.