The global coronavirus pandemic has also spawned an epidemic of online disinformation, ranging from false home remedies to state-sponsored influence campaigns. In this confusing environment, false medical theories have even found accidental legitimization from corporate actors: last week CVS pharmacy reportedly e-mailed guidelines to employees including the erroneous claim, debunked by experts, that coronavirus could be swept into the stomach and killed by drinking hot water. To stem the growing “infodemic,” social media platforms have moved quickly to quash disinformation on their services. Their response represents the strongest attempt to police disinformation to date, though actual results have been mixed.

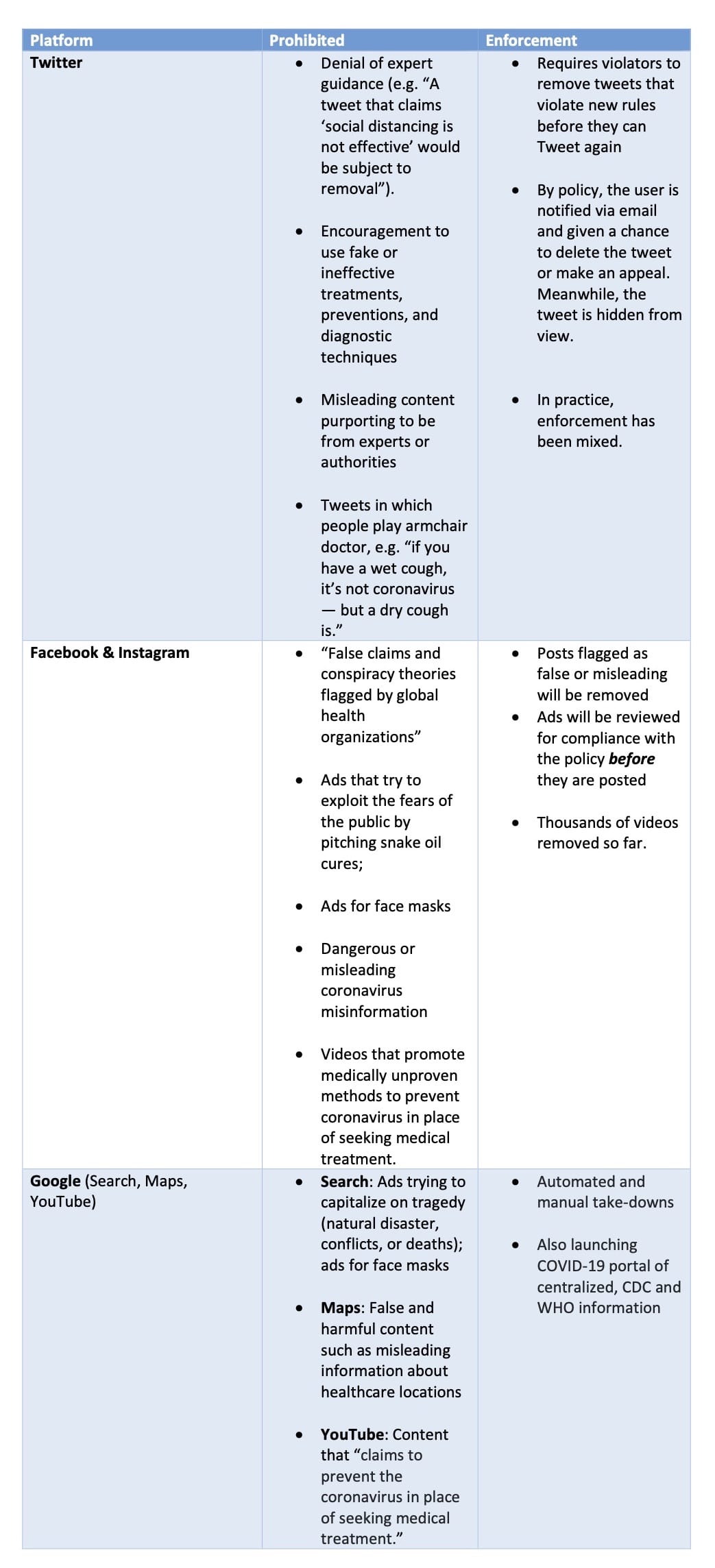

Political will to tackle foreign disinformation online has been lacking, and foreign actors are seeking to use the current crisis to their advantage. But after years of lagging action, platforms are responding to false information about the coronavirus pandemic with aggressive policy changes, showing what’s possible when there is a strong will to act. Displaying an unprecedented interest in removing content that threatens public health, Twitter’s new policy prohibits tweets that deny expert guidance, encourage fake or ineffective treatments, or deceptively purport to come from authorities, and it omits even the exceptions for satire typically included in other policies. Google and Facebook have decided to ban advertisements for medical face masks, given the nation’s short supply and CDC guidance against healthy people wearing them. Amazon has removed one million products on the basis of dubious health claims.

Implementation of these aggressive new policies has been uneven due to a lack of uniform enforcement and greater reliance on automation, both challenges that extend to disinformation beyond COVID-19. Despite the strongly worded policy to the contrary, ads for face masks continued to surface through Google’s advertising platform and on Facebook. And Twitter refused to remove a tweet from Elon Musk incorrectly calling children “essentially immune” from the virus, even though its own policy had explicitly cited the claim that the virus does not infect children as an example of a prohibited denial of established facts. Twitter has also left up conspiracy theories from Chinese state officials and media about the origins of the virus itself. On Facebook, a bug in the anti-spam filter designed to remove coronavirus disinformation also removed posts linking to legitimate sources, including The Guardian, Dallas Morning News, and the European Union’s coronavirus guidance.

Where platforms have been more successful is in prioritizing quality information, a crucial element of a counter-disinformation strategy that should remain in place beyond this crisis. Google search displays authoritative information from mainstream media outlets and prominently offers links to sources such as the Centers for Disease Control and Prevention (CDC) and World Health Organization (WHO). On Instagram, a pop-up banner linking to information from the WHO greets all users. Google and Facebook are also launching hubs for trusted coronavirus information. Even platforms relatively new to the challenge of disinformation, like Pinterest, now send coronavirus searches to sparse pages with WHO content. WhatsApp, which has been criticized for allowing viral disinformation to flourish under the radar in encrypted and group chats, has taken the initiative to provide special accounts for users to forward suspect information for verification.

Though imperfect, these steps demonstrate many of the novel tools major platforms have at their disposal to fight disinformation. As the crisis evolves, both the platforms and their users should pay attention to the successes and failures of these new policies and tactics — and assess their value in improving the online information environment beyond COVID-19.

The following chart displays new coronavirus disinformation policies enacted by Twitter, Facebook, and Google with notes on their enforcement actions.

The views expressed in GMF publications and commentary are the views of the author alone.