Summary

The Russian government’s active measures campaign during the 2016 U.S. presidential election was a watershed moment in the study of modern information operations. Revelations that the Kremlin had purchased divisive political ads on social media platforms, leaked private campaign e-mails, established social media groups to organize offline protests, and deployed paid government trolls and automated accounts on social media sparked a groundswell of concern over foreign nation states’ exploitation of online information platforms. U.S. media organizations and the American public still largely associate disinformation campaigns with elections, viewing information operations through the prism of the 2016 campaign and, more recently, the 2018 midterms. Yet, analysis of Russia’s ongoing social media operations reveals that efforts to interfere in elections are but one tactical objective in a long-term strategy to increase polarization and destabilize society, and to undermine faith in democratic institutions. In order to understand the full scale and scope of the Kremlin’s efforts, it is therefore critical to view Russia’s subversive information activities as a continuous, relentless assault, rather than as a series of targeted, event-specific campaigns.

This report examines several million tweets from known and suspected Russian-linked Twitter accounts in an effort to expose the methods and messages used to engage and influence American audiences on social media. Data is primarily drawn from Hamilton 68, a project from the Alliance for Securing Democracy that has tracked Russian-linked Twitter accounts since August 2017. Additional data is sourced from Twitter’s release of more than 3,800 Internet Research Agency (IRA) accounts. Coupled with analysis of criminal complaints and indictments from the Department of Justice, this report builds a case that Russia’s election-specific interference efforts are secondary to efforts to promote Russia’s geopolitical interests, export its illiberal worldview, and weaken the United States by exacerbating existing social and political divisions. By examining Russia’s information operations on Twitter from both an operational and thematic perspective, this report also highlights the tactics, techniques, and narratives used to influence Americans online. Through this analysis, we illuminate the ongoing challenges of protecting the free exchange of information from foreign efforts to manipulate public debates.

Introduction

On August 2, 2017, at least 11 different Twitter accounts posing as Americans — but operated by Russian trolls working for the Internet Research Agency (IRA) in St. Petersburg — tweeted messages urging the dismissal of then-U.S. National Security Advisor H.R. McMaster.1 Far from the typical partisan banter, this was an inside attack — one orchestrated by Russian trolls masquerading as conservative Americans who purported to be loyal to President Trump. Among them was @TEN_GOP, an infamous IRA troll account claiming to be the “unofficial Twitter of Tennessee Republicans,” which blasted McMaster in a critical post and urged its over 140,000 followers2 to retweet “if you think McMaster needs to go.”3

Figure 1: Tweet recreated for visual purposes. Retweets and likes are not accurate depictions of engagement.

At the time, key details of the Kremlin’s online active measures campaign — including the purchase of divisive ads, the creation of fictitious Facebook groups, the coordinated release of hacked information, and the promotion of offline protests — had yet to be revealed or fully understood. It would be months before the first Senate hearing on Russian interference on social media4 and a half-year before Special Counsel Robert Mueller’s indictment against the Internet Research Agency.5 Despite the unanimous assessment of the U.S. intelligence community that Russia interfered in the 2016 U.S. presidential election,6 the American public remained vulnerable, in no small part due to the tech companies’ lack of candor about abuses on their respective platforms and inaction by legislators to hold them accountable.

In the absence of a robust government or private sector response, the Alliance for Securing Democracy (ASD) unveiled Hamilton 68,7 a dashboard displaying the near-real-time output of roughly 600 Twitter accounts connected to Kremlin influence operations in the United States.8 Launched on the same day as the IRA attack on McMaster, the dashboard was our attempt to create an early warning mechanism that could flag information operations (like Russian-linked efforts to amplify the #FireMcMaster hashtag campaign) at their onset, thereby reducing their effectiveness.9 The objective was not to unmask, dox, or shut down accounts, but rather to improve our collective resilience by exposing the tactics and techniques used by the Kremlin and its proxies to manipulate information on social media. The dashboard was also an attempt to move the conversation past myopic and often partisan re-litigations of the 2016 U.S. presidential election by illuminating the ongoing nature of Russian-linked influence campaigns.

State of Play

Today, we have a significantly better understanding of how the Kremlin uses computational tools for the purpose of insinuation and influence. The excellent work of disinformation researchers, investigative reporters, and various law enforcement agencies, coupled with improved transparency from the platforms, has contributed to a greater public understanding of the threat. Yet, misconceptions persist. Despite consistent coverage of digital disinformation issues since 2016, the current debate still fails to capture the full scale and scope of Russia’s online interference activities.

Part of the problem is framing. Much of the conversation around the Russian government’s online information operations has focused on its hand in the creation or promotion of demonstrably false information. The tendency to associate information operations with falsehoods or wild conspiracy theories misses an important point: the vast majority of content promoted by Russian-linked networks is not, strictly speaking, “fake news.” Instead, it is a mixture of half-truths and selected truths, often filtered through a deeply cynical and conspiratorial worldview. For this reason, the term “information manipulation” — a phrase popularized in the excellent French report by the same name10 — is a more accurate description of Russian efforts to shape the information space, as it is not limited by the definitional constraints of terms like disinformation or propaganda. It also encapsulates the uniquely “social” elements of online information operations; the terms of yesteryear, it turns out, are ill-equipped to define a phenomenon that now includes cat videos and viral memes.

But another problem is the natural tendency to report on new occurrences of interference rather than on the steady, more subtle efforts to shape global narratives. Rapid-reaction reporting has value (as noted, Hamilton is meant to be an early warning system), but the fixation on individual episodes of interference can be problematic. Defining information operations as a series of isolated events rather than as a continuous, persistent campaign makes it easy to miss the forest for the trees.

This report, in part, is our effort to bring the forest back into focus. By zooming out from the daily churn of trending topics, we hope to elucidate the Kremlin’s strategic objectives and the methods used to achieve those objectives. Although the analysis in this report is based primarily on data collected by Hamilton 68, this is not merely a summation of our research. As we have noted, Hamilton tracks “but one sample in a wide-ranging population of Kremlin-oriented accounts that pursue audience infiltration and manipulation in many countries, regions, and languages via several social media platforms.”11 As such, this report incorporates relevant third-party academic and civil society research, investigative reports, government inquiries, criminal complaints, and revelations from the social media platforms themselves. In particular, we make extensive use of Twitter’s release of data from more than 3,800 IRA-linked accounts,12 as well as the recent criminal complaint against Elena Khusyaynova, the IRA accountant accused of conspiracy to defraud the United States.13

At the same time, this report is hardly comprehensive. The data within is largely sourced from Twitter, meaning that some of our findings are likely less applicable to other social media platforms. The scope of this paper is also limited to the Kremlin’s information operations on social media, and does not include other elements of Moscow’s messaging apparatus, including its traditional media channels like RT, Sputnik, and other state-controlled outlets. It is therefore critical to stress that Russia’s information operations on social media do not exist in a vacuum; they are integrated into a wider strategy that employs a range of messengers and tools, including offensive cyber operations.14

Despite these limitations, it is our hope that this report will paint a clearer picture of the Kremlin’s social media operations by highlighting the methods and messengers used to influence Americans online:

- First, we highlight the narratives used to influence American public opinion and amplify societal divisions. What are the common characteristics of the themes promoted to American audiences online? How is apolitical “social” content used to attract followers? What is the interplay between domestic content of interest to U.S. audiences and geopolitical content of interest to the Kremlin?

- Second, we analyze the fundamental differences between computational propaganda and previous propaganda iterations. What structural elements of digital platforms make the propaganda of today different from its offline predecessors?

- Third, we highlight the operational elements of social media information operations. Looking at both individual account behavior and that of coordinated networks, we explore the methods employed by overt and covert accounts to infiltrate audiences and disseminate content on social media.

- Fourth, we examine the key messaging moments of the past year. Reviewing data from Hamilton 68 and known IRA accounts, we highlight activity “spikes” over the past year in order to identify Moscow’s messaging priorities. We then provide three case studies to analyze the tactics and techniques used to shape specific narratives.

- Finally, we address the issue of impact. This paper does not attempt to answer empirical questions related to influence. It does suggest, however, indicators for determining when Russian efforts might influence broader, organic conversations online.

Manipulated Narratives

Russian-linked disinformation accounts engage in a near-constant dialogue with western audiences, mixing friendly banter and partisan “hot takes” with old-fashioned geopolitical propaganda. In isolation, the narratives promoted to western audiences often appear to lack a strategic objective; in aggregate, however, it is far easier to extract the signal from the noise.

On Hamilton 68, we have noted a consistent blend of topics — from natural disasters to national tragedies, pop culture to Russian military operations. Broadly speaking, though, content promoted by known IRA trolls and Russian-linked accounts falls into one of three categories:

- Social and political topics of interest to American audiences

- Geopolitical topics of interest to the Kremlin

- Apolitical topics used to attract and engage followers

Depending on the type of account, the ratio of posts containing domestic, geopolitical, or innocuous narratives differs drastically. Certain accounts largely focus on geopolitical issues; others primarily target U.S. social and political topics. Yet, there is evidence that most accounts mix messages and themes. This is true even of RT and Sputnik, which frequently insert clickbait content into an otherwise predictable pattern of pro-Russian, anti-Western reporting.

This mix is a particular feature of Russian accounts posing as Americans online. IRA troll data released by Twitter shows accounts sharing Halloween costume ideas, movie suggestions, celebrity gossip, and “family-friendly summer recipes.”15 Similarly, accounts monitored on Hamilton 68 often hijack innocuous, trending hashtags (for example, #wednesdaywisdom or #mondaymotivation) to boost the visibility of their content. The use of “social” content serves two purposes: it adds a layer of authenticity to accounts, and it can attract new followers, allowing for audience infiltration.

With rare exceptions, though, the daily focus of sock-puppet troll accounts is on the most divisive social or political topic in a given news cycle. Those topics tend to be transient, meaning that interest ebbs and flows with the rhythms of broader conversations on social media. The constants are the geopolitical topics. Rarely are they the most discussed topic on a given day, but over time the Kremlin’s geopolitical interests emerge as clear messaging priorities: namely, the wars in Syria and Ukraine, but also Russian reputational issues (e.g., Olympic doping and the poisoning of Sergei and Yulia Skripal) and efforts to divide transatlantic allies (e.g., the promotion of anti-NATO narratives and the amplification of Islamic terrorism threats in Europe).16 On Hamilton 68, for example, both Ukraine and Syria are among the top five most-discussed topics over the past year, despite rarely appearing as the most prominent topic on any individual day. This is an oft-overlooked point: there is a strategic, geopolitical component buried beneath the more obvious efforts to “sow discord” in the West.
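The gap between daily prominence and aggregate prominence is easy to overlook, so here is a minimal Python sketch of it. The topic counts below are invented for illustration and are not Hamilton 68 data; the helper names `daily_leaders` and `aggregate_ranking` are hypothetical. Each day a different transient topic leads, while two steady topics never lead on any single day.

```python
from collections import Counter

# Hypothetical per-day topic counts, invented for illustration (not Hamilton 68 data).
daily_counts = [
    {"nfl": 120, "syria": 40, "ukraine": 35, "hurricane": 10},
    {"memo": 150, "syria": 45, "ukraine": 30, "nfl": 20},
    {"shooting": 135, "syria": 38, "ukraine": 42},
    {"olympics": 110, "syria": 50, "ukraine": 33, "memo": 15},
    {"mcmaster": 130, "syria": 41, "ukraine": 39},
]


def daily_leaders(days):
    """Return the single most-discussed topic for each day."""
    return [max(day, key=day.get) for day in days]


def aggregate_ranking(days, n=5):
    """Sum each topic's counts across all days and return the n largest totals."""
    totals = Counter()
    for day in days:
        totals.update(day)  # adds this day's counts to the running totals
    return [topic for topic, _ in totals.most_common(n)]


leaders = daily_leaders(daily_counts)
top_overall = aggregate_ranking(daily_counts)
print("Daily leaders:", leaders)
print("Top five overall:", top_overall)
```

With these toy numbers, neither "syria" nor "ukraine" leads on any day, yet they rank first and second in the five-day totals (214 and 179 mentions). A summary keyed only to each day's top topic would never surface them.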

Trust the Messenger

By adopting genuine American positions (albeit hyper-partisan ones) on both the left and the right of the political spectrum, Russian-linked accounts gain credibility within certain American social networks. Credibility is critical to an audience’s receptivity to a message. In this context, however, credibility is not an objective term; it is a social construct determined by a group’s perception of the truth. Those perceptions are intimately tied to a given group’s worldviews, meaning that audiences on the political fringes are more likely to view likeminded accounts as trustworthy, whether or not those accounts have a history of spreading falsehoods. In a RAND paper on Russia’s propaganda model, Christopher Paul and Miriam Matthews write, “If a propaganda channel is (or purports to be) from a group the recipient identifies with, it is more likely to be persuasive.”17 In this case, the propaganda channel is the account itself.

American Narratives, Russian Goals

On American issues, Russian-linked accounts do not need to be persuasive. Trolls target audiences with messages tailored to their preferences, so the content shared with those audiences merely solidifies preconceived beliefs. Information operations may harden opinions, but they do not create them.

The wedge issues used to exacerbate existing fissures in American society have been well documented,18 but the Khusyaynova criminal complaint provides a useful refresher:

… members of the Conspiracy used social media and other internet platforms to inflame passions on a wide variety of topics, including immigration, gun control and the Second Amendment, the Confederate flag, race relations, LGBT issues, the Women’s March, and the NFL anthem debate. Members of the Conspiracy took advantage of specific events in the United States to anchor their themes, including the shootings of church members in Charleston, South Carolina, and concert attendees in Las Vegas, Nevada; the Charlottesville “Unite the Right” rally and associated violence; police shootings of African-American men; as well as the personnel and policy decisions of the current U.S. administration.19

The outcome of each of these debates is largely irrelevant to the Kremlin. The Russian government has no stake in gun control or NFL protests; it does, however, have a stake in amplifying the most corrosive voices within those debates. By amplifying extreme, partisan positions, its accounts can further poison public discourse while simultaneously endearing themselves to “like-minded” users.

Besides pushing socially disruptive narratives, Russian-linked accounts have also engaged in a protracted campaign to undermine U.S. institutions and democratic processes. Hamilton 68 has noted a consistent effort to discredit the Mueller investigation and the Department of Justice. “Deep State” conspiracy theories run rampant, as do attacks against more moderate forces in the administration and Congress, including the late John McCain.20 Last December, for example, Russian-linked accounts cast doubt on the Senator’s declining health, promoting a True Pundit article with the headline, “As the Trump Dossier Scandal Grows and Implicates Him, McCain checks into Hospital.”21 The Khusyaynova criminal complaint provides further evidence — a “tasking specific” directed IRA trolls to “brand McCain as an old geezer who has lost it and who long ago belonged in a home for the elderly.”22

The validity of elections is also a target. Russian-linked accounts have promoted content that highlights voter suppression efforts or that amplifies purported instances of voter fraud. After the Roy Moore/Doug Jones special election in Alabama, one of the top URLs promoted by accounts monitored on Hamilton 68 was a race-baiting Patriot Post article with the headline, “BREAKING: Busload Of Blacks From 3 States Drove To Alabama To Vote Illegally.”23 In an ironic twist, IRA troll accounts have also stoked fears over the possibility of Russian meddling in future elections, including @fighttoresist, a left-leaning troll that tweeted in February: “FBI chief: Trump hasn’t directed me to stop Russian meddling in midterms[.] We know why Trump doesn’t want to act against Russia.”24

It is important to note that Russian-linked accounts did not create any of the aforementioned social or political cleavages. Instead, their role is to support or undermine positions within a debate, often simultaneously. For instance, during a contentious political debate over the public release of the so-called FISA memo,25 trolls staked out positions on both sides of the debate:

On January 29, @barbarafortrump — unsurprisingly, a “right” troll — wrote, “FBI Deputy Director McCabe resigns because he knows what’s in that memo. Now we must press them harder to #ReleaseTheMemo Transparency is essential and no president were more transparent than @realDonaldTrump.”26

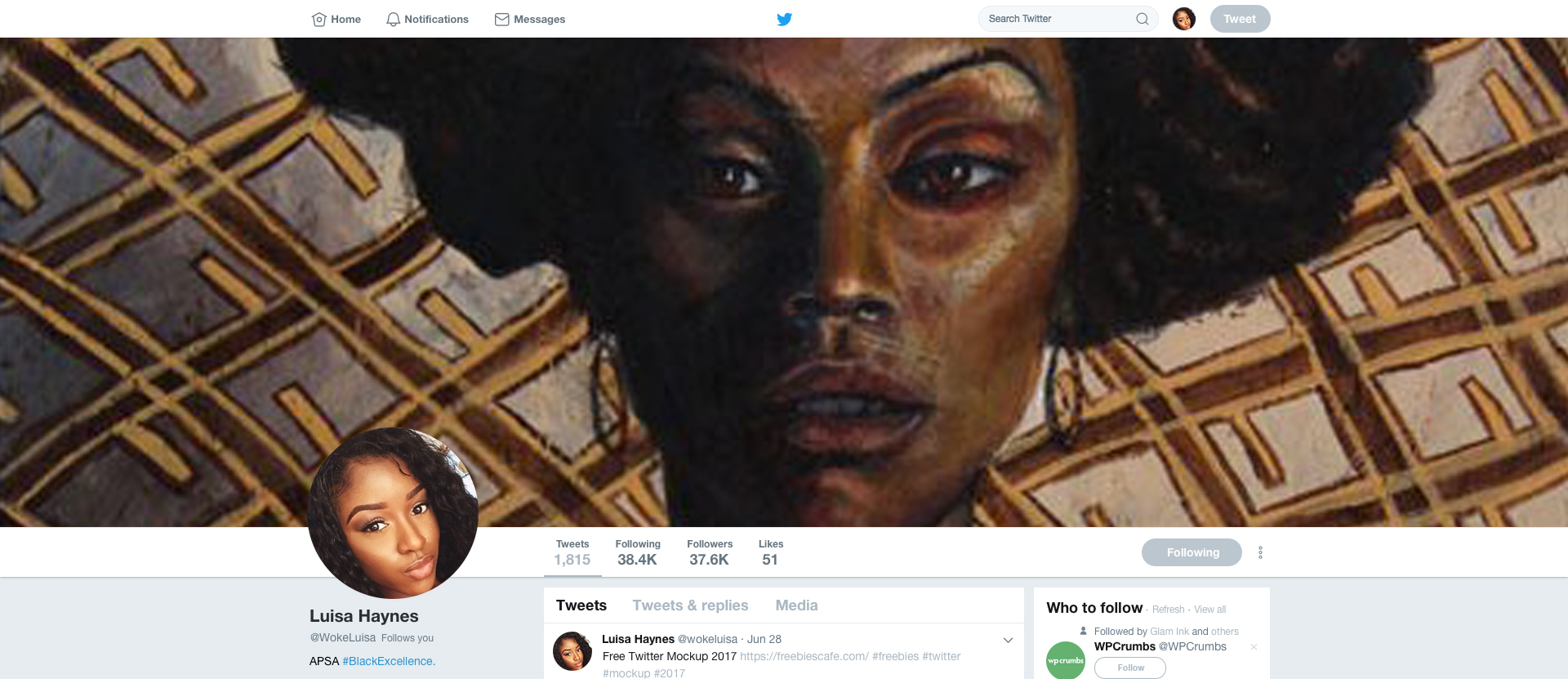

A few days later, @wokeluisa, a “left” troll masquerading as a Black Lives Matter activist, tweeted, “Congrats, Republicans. You just released a memo that confirms the FBI didn’t get FISA warrants based off the Dossier. Debunking your own conspiracy theory with your own document. Meta. #RemoveNunes.”27

It is clear that Russian-linked accounts are merely narrative scavengers, circling the partisan corners of the internet for new talking points. Genuine American voices provide the narratives; Russian-linked accounts provide the megaphone.

But Americans also provide the content, at least on domestic issues. Details provided in the criminal complaint against Elena Khusyaynova reveal the extent to which IRA trolls simply copy and paste stories from real American outlets, credible and otherwise:

Members of the Conspiracy also developed strategies and guidance to target audiences with conservative and liberal viewpoints, as well as particular social groups. For example, a member of the Conspiracy advised in or around October 2017 that “if you write posts in liberal groups, … you must not use Breitbart titles. On the contrary, if you write posts in a conservative group, do not use Washington Post or BuzzFeed’s titles.”28

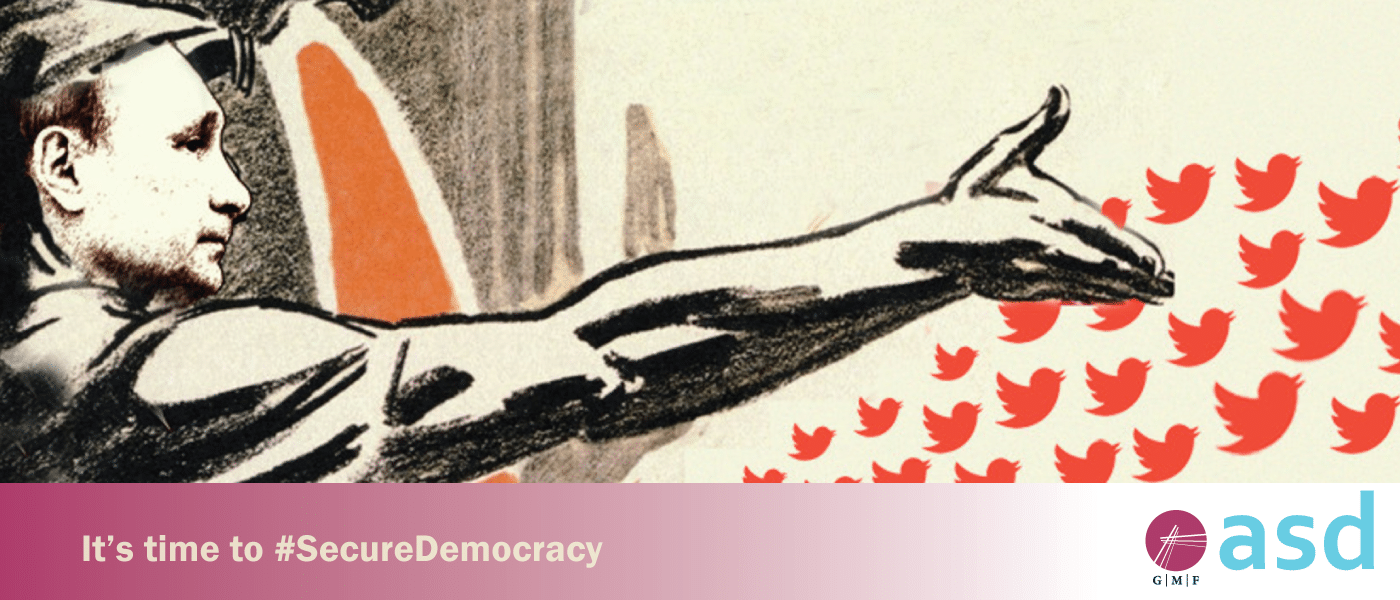

This strategy is reflected in the sites linked to by accounts monitored on Hamilton 68. Hashtags and bios often reveal the audience an account is targeting, but the URLs and domains reveal what the account wants that audience to believe. When the conversation is about an American topic, Russian-linked accounts pull headlines from, or link to, American sites. Among tweets containing keywords like “Trump” or “NFL,” the most commonly linked domains are almost exclusively American (see Figure 2). Given that most accounts within our network target conservative supporters of the president, right-leaning or hard-right domains are favored.

Figure 2 – The domains most commonly linked to in tweets containing the keywords “Trump” or “NFL.” Data from Hamilton 68.
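The report does not describe how Hamilton 68 tallies linked domains, so the following is only a minimal Python sketch under our own assumptions: a tweet matches if its text contains any keyword as a whole word (case-insensitive), a "domain" is the URL host with any leading "www." removed, and the sample tweets and `.example` domains are invented. `domain_of` and `top_domains` are hypothetical helper names.

```python
import re
from collections import Counter
from urllib.parse import urlparse

# Hypothetical tweets with placeholder .example domains (not real accounts or sites).
tweets = [
    {"text": "Trump wins again", "urls": ["https://www.us-outlet-a.example/story1"]},
    {"text": "The NFL protests continue",
     "urls": ["https://us-outlet-b.example/nfl", "https://www.us-outlet-a.example/x"]},
    {"text": "Breaking news on Syria", "urls": ["https://state-outlet.example/syria"]},
    {"text": "trump and the nfl", "urls": ["https://us-outlet-a.example/y"]},
]


def domain_of(url):
    """Return the lowercase host of a URL, dropping a leading 'www.'."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def top_domains(tweets, keywords, n=10):
    """Tally linked domains in tweets whose text mentions any keyword."""
    # Whole-word, case-insensitive match on any of the keywords.
    pattern = re.compile(
        r"\b(?:" + "|".join(re.escape(k) for k in keywords) + r")\b", re.IGNORECASE
    )
    counts = Counter()
    for tweet in tweets:
        if pattern.search(tweet["text"]):
            counts.update(domain_of(u) for u in tweet["urls"])
    return counts.most_common(n)


print(top_domains(tweets, ["Trump", "NFL"]))
print(top_domains(tweets, ["Ukraine", "Syria"]))
```

With this toy input, the "Trump"/"NFL" filter returns `[('us-outlet-a.example', 3), ('us-outlet-b.example', 1)]`, and the "Ukraine"/"Syria" filter returns only `state-outlet.example`. Real data would need more care (for example, expanding Twitter's t.co short links and deciding how to treat retweets), which this sketch ignores.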

When tweeting about geopolitical topics of interest to Russia, however, Russian-linked accounts shift to pro-Kremlin messages and messengers. As depicted in Figure 3, Russian-linked accounts monitored on the dashboard almost exclusively link to Kremlin-funded sites or those with ideological affinities when tweeting about topics related to Ukraine and Syria.

Figure 3 – The domains most commonly linked to in tweets containing the keywords “Ukraine” or “Syria.” Data from Hamilton 68.

Unsurprisingly, RT.com is the most popular domain associated with tweets about both Syria and Ukraine. RT’s reach is likely even greater if you factor in YouTube, where RT’s channel is a go-to source for geopolitical videos. Perhaps more interesting, however, are the sites not directly funded by the Kremlin. In tweets about Ukraine, for example, Stalkerzone.org (a blog run by Oleg Tsarov, a pro-Russian separatist in eastern Ukraine) trails only RT as a source of information. The 10th most popular site is DNInews.com (Donbass International News Agency). According to SimilarWeb statistics, Stalkerzone and DNInews rank 651,637th and 7,148,079th, respectively, among the most visited websites worldwide.29 Put simply, American audiences are not likely to find or visit these sites on their own.

Similarly, tweets about Syria direct users to a steady diet of pro-Assad and/or pro-Kremlin pages. Almasdarnews.com, the second most linked-to domain, is a website focusing on the Middle East that Newsweek describes as “pro [Syrian] government.”30 Domains run by known Kremlin sympathizers or those that attack Kremlin critics round out the top 10. While some of these sites, including MintPress and ZeroHedge, are based in the United States, all of them report on geopolitical events from a distinctly anti-western perspective.

The use of relatively unknown, foreign, or low-trafficked sites to promote the Russian government’s geopolitical agenda seems to diverge from the strategy of using known, trusted messengers. However, if we consider the social media accounts themselves to be the messengers, the sources of information become less relevant. If an account has built up its in-group credentials by linking to sites favored by the target audience on American issues, inserting lesser-known or less-trusted sources on geopolitical issues is likely less problematic.

Folding pro-Kremlin messages into daily chatter about American issues also makes the propagandistic element of the Russian government’s information operations less apparent. Take the tweeting habits of @wokeluisa, the aforementioned IRA persona known for tweeting about police brutality and social justice issues. Although the vast majority of “her” tweets focused on American issues, “she” also weighed in on the U.S. decision to launch retaliatory strikes in Syria on April 7, 2017, tweeting: “The U.S. bombing Syria to ‘save’ children is just another excuse for war;”31 and “Trump’s presidency in a nutshell: U.S. bombed Syria to tell Syria not to bomb Syria.”32 The opinions are clearly in line with Moscow’s interests, but the messages are crafted to resonate with left-leaning American audiences.

Russian Information Operations on Social Media: Old Tactics, New Techniques

Attempts to aggravate conflicts in the West are hardly new; Soviet “active measures” sought to weaken the United States by sowing internal discord and discrediting America abroad. But while the themes may feel familiar, the scale, scope, and efficiency of today’s information operations are unrecognizable from their predecessors.

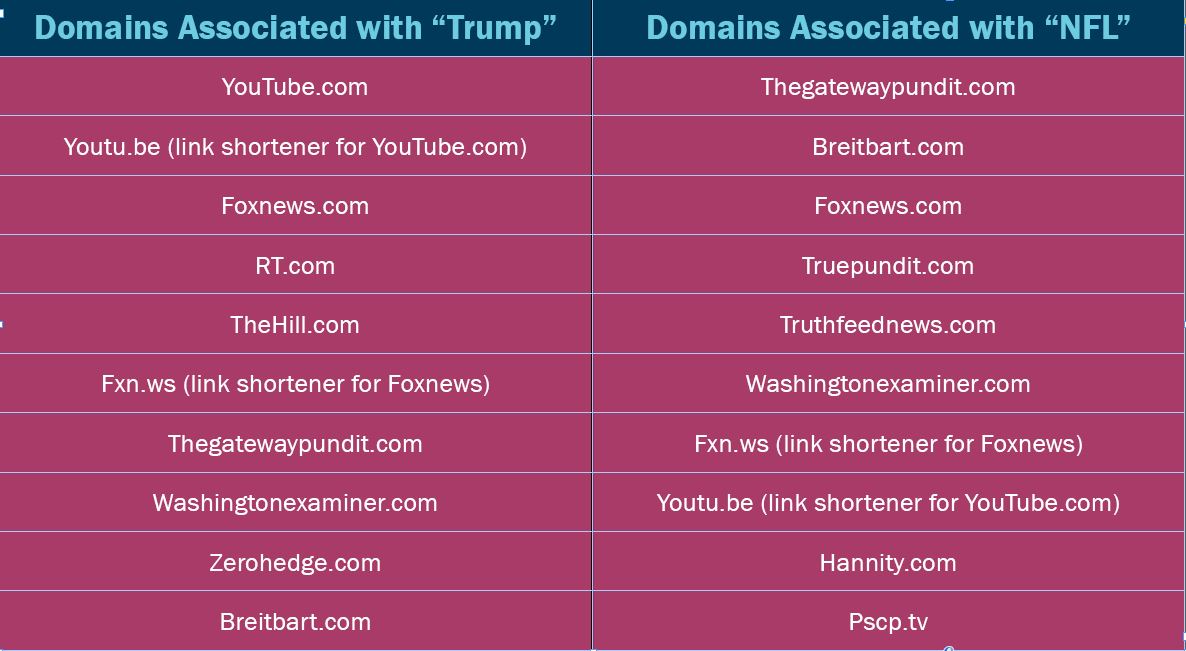

Compare two similar disinformation operations: one from the pre-social media era and one from today. In 1980, People’s World, a U.S. Communist Party paper, published a report alleging that the U.S. government was researching a biological weapon that could selectively target particular ethnic groups or races. With help from Soviet disinformation agents, variations of the so-called “ethnic weapon” story bounced around sympathetic third world publications throughout the early 1980s. In 1984, the rumor resurfaced in TASS (the official Kremlin mouthpiece), which accused the United States of working with Israel and South Africa to test the viruses “on Africans in prisons of the apartheid state and on Arab prisoners in Israeli jails.”33 Over the next several years, Soviet publications repeated the rumor on at least twelve different occasions, adding new plot twists to inject oxygen into the lungs of the story. In 1987, Dan Rather referenced the rumor on the CBS Evening News, thus completing the information laundering cycle.34

Compare that to the recent Russian claim that the U.S. is developing “drones filled with toxic mosquitos” at a clandestine biological weapons lab in Georgia.35 Although Kremlin-fueled conspiracy theories about the Georgian lab’s true purpose have swirled since its opening in 2013, the most recent claims surfaced on a low-trafficked Bulgarian blog (the modern equivalent of People’s World) in mid-September 2018. The story spread through Twitter (with the assistance of Russian-linked accounts),36 and within days appeared on RT, Sputnik, and a wide range of pro-Kremlin and conspiratorial sites. By early October, the story had jumped from the less-traversed corners of the internet into the Associated Press and other major western publications. A narrative that once took years and significant resources to place, layer, and integrate into western conversations had spread across digital platforms in a matter of weeks. And this was a relatively slow contagion; online rumors or leaked information can now spread in a matter of days, if not hours.

Figure 4 – A comparative timeline of the spread of anti-American rumors before and after the advent of social media.

Speed is not the only benefit. Digital platforms provide several other advantages for modern information operations, including:

- Low-cost publishing: The resources needed to create an extensive network of carve-outs, cutouts, and proxy sites are minimal. For a fraction of the cost of running a single sympathetic newspaper, the Kremlin and its supporters can create hundreds of professional-looking and seemingly credible “news” sites.

- Elimination of “gatekeepers”: The horizontal nature of the internet allows information to be published without editorial oversight. The blurring of lines between professional journalists and citizen journalists means that anyone can be a source of information.

- Anonymity: Social media provides ideal conditions for the dissemination of black and gray propaganda. The ability to post anonymously or pseudonymously allows purveyors of false and manipulated information to mask both the origin of the information and their role in the spread of that information.

- Precision Targeting: Audience segmentation and consolidation online makes it easier to tailor specific messages for specific populations. The same behavioral data that fuels the digital advertising ecosystem can be used to study and target foreign audiences.

- Automated Amplification: The use of bots allows a relatively small number of operators to amplify messages exponentially across multiple platforms and channels. Bot networks and trolls-for-hire can also “manufacture consensus,” making a candidate or policy appear more (or less) widely supported than it actually is.

None of these modern features is inherently nefarious, but in the hands of authoritarian actors with the resources and desire to exploit them, they can be — and have been — used for more malign purposes.

Operational Features: The View From The Ground

The disinformation “touchpoint” for most social media users is at the individual account level. This is especially true on Twitter, where content is most readily discoverable to those who follow a specific account. It is therefore critical that Russian-linked accounts (whether legitimate or illegitimate, automated or human-operated) attract audiences of real users. At the granular level, information operations resemble people-to-people exchanges, albeit with a perverse twist.

This section will primarily focus on the strategies used by covert accounts to attract and engage American audiences online; however, it is important to stress that Russia’s information operations run through both covert and overt accounts. These accounts include government trolls, bots, “patriotic” Russians,37 sympathetic foreigners, diplomats, GRU hackers, and government officials, to name but a few. The diversity of accounts is important to stress, as there has been a tendency to mislabel all Russian-linked disinformation accounts as “Russian bots.” Indeed, the term “Russian-linked” is also an imperfect description, as accounts that regularly promote Russian narratives do not necessarily have links, per se, to Russia itself. Our use of the term in this and other publications is simply our best attempt to describe the constellation of accounts in Russian disinformation networks.

Despite the fact that these accounts often amplify the same narratives and, at times, each other, they are quite different. Some come close to legitimate public diplomacy; others egregiously violate international norms, not to mention Twitter’s terms of service. It is therefore useful to delineate account types. For lack of a better option, we repurpose the traditional propaganda typology:

- White propaganda accounts (overt): Accounts that are overtly connected to the Russian government. This includes official government accounts and English-language accounts run by Russian embassies (most notably, @RussianEmbassy and @mfa_Russia), as well as Kremlin-funded and controlled media, namely RT and Sputnik.

- Black propaganda accounts (covert): Accounts that use sock puppets or stolen identities to mask the identity of the user. Typically, these accounts adopt American personas to lend credibility and a patina of authenticity to their opinions. IRA troll and bot accounts are the most visible examples, but accounts operated by Russian military intelligence — for example, Guccifer 2.0 and DCLeaks — and fictitious “local” news accounts (@ChicagoDailyNew) also fit in this category.38

- Gray propaganda accounts: Accounts that fall between black and white propaganda. These accounts differ from black propaganda accounts in that they do not use deception to conceal their identities; however, these accounts may obfuscate the affiliations or affinities of the user. This category includes accounts connected to individuals as well as those connected to pro-Kremlin outlets, including @TheDuran_com and @FortRussNews.

Although each account serves a role in the propagation of manipulated information, of most concern is the use of black propaganda accounts. These accounts use deception to interfere in the social and political debates of target populations, with the intent to corrode those debates through the insertion or amplification of divisive narratives. Congressional hearings and investigative reports have highlighted the content spread by these trolls (a subject we covered earlier in this report), but less attention has been paid to the techniques used by covert operators to gain credibility and legitimacy with American audiences.

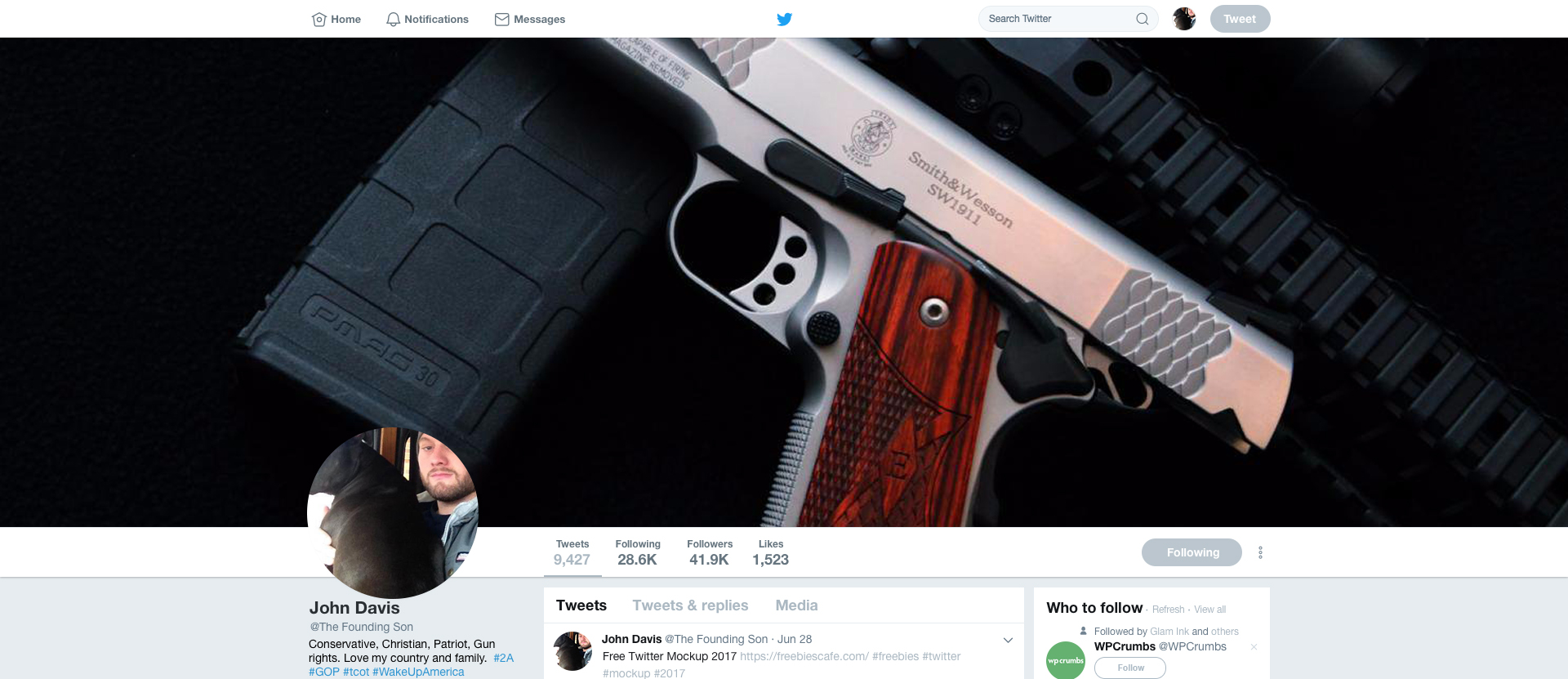

As detailed in the criminal complaint against Khusyaynova, IRA employees develop “strategies and guidance to target audiences with conservative and liberal viewpoints, as well as particular social groups.” One such strategy is the use of visual and descriptive character traits meant to resonate with particular audiences. Like authors creating backstories for fictional characters, IRA trolls use images and biographical descriptions to lend online personas both a veneer of authenticity and certain in-group credentials. These “physical” characteristics provide the digital equivalent of a first impression and are part and parcel of the effort to attract and influence American audiences. Mirroring the target audience plays to users’ natural, implicit bias toward sources that look and talk like they do.

While adopted personas vary depending on the targeted audience, a review of suspected troll accounts on Hamilton 68 and the known IRA troll accounts released by Twitter reveals commonalities, regardless of the ideological target. It is important to note, though, that the patterns detailed below are not meant as investigative clues or evidence of subterfuge. Many legitimate accounts share similar traits, meaning that any effort to unmask accounts based on the details below would be unfounded, if not dangerous. The purpose of identifying these traits is to gain greater insight into the methods used to mimic real Americans online, not to dox those with pro-Kremlin opinions. Common biographical traits include, but are not limited to, the following:

- Mention of nationality: It is common for authentic accounts to highlight their hometowns or nationalities; in troll account profiles, however, the practice is ubiquitous. In fact, the most common term (by a wide margin) used in biographies of the suspended troll accounts released by Twitter is “USA.” “United” and “States” are the third and fourth most common terms, followed by a long list of American cities, led by New York and Atlanta. Anecdotally, this has long been a feature of troll activity on message boards, where suspected foreign actors often qualify statements with variations of: “I am an American who believes (insert opinion).” Genuine American social media users may boast of their nationality, but they are under no obligation to do so.

- Political preferences: It has been well documented that IRA trolls play both sides of political and social debates. It is important to stress, however, that individual accounts are ideologically consistent, perhaps even more so than partisan Americans. The adherence to a purported ideology is so consistent that Clemson University researchers Darren Linvill and Patrick Warren categorized IRA-associated accounts as “left” or “right” trolls.39 Those classifications were based on analysis of the content the accounts promoted, but the clear ideological bent of IRA trolls is also reflected in their biographical descriptions, banner images, and profile pictures. Many include explicit references to political affiliations (for example, “conservative” or “Democrat”) or implicit clues, such as banner photos of President Trump or, conversely, biographical hashtags like #resist or #blacklivesmatter.

- Group affiliations: Beyond clearly stated political preferences, IRA trolls use social or religious affiliations to attract target audiences. On the right, covert accounts often highlight — through images or descriptive terms — their Christian faith or a connection to the U.S. military. On the left, accounts are more likely to mention their race and/or a connection to a social justice movement.

- Humanizing features: IRA trolls understand the need to create accounts that are likeable and relatable. Terms like “mother,” “animal lover,” and “retired” are common, particularly with right troll accounts. One IRA persona even declared himself to be the “proud husband of @_SherylGilbert” — an account, as it turns out, the IRA also operated (see right troll example 2 below).

- Excessive hashtags: To make accounts more visible in searches, IRA trolls list popular hashtags in their bios that are likely to resonate with target audiences. Again, this is a common feature of many politically engaged accounts; #MAGA and #Resist, among others, are widely used. But IRA accounts often use three or more hashtags in their bios, frequently updating them to co-opt new, trending hashtags.

- Requests for followers: IRA accounts shamelessly pander for followers, with many including “follow me” in their biographies. Some even offer a degree of reciprocity. For example, an account describing herself as a “super lefty-liberal feminist” promised to “follow back, unless you’re weird.”40

Besides the features mentioned above, IRA sock-puppet accounts are also noteworthy for what they do not include:

- Nuance: The diversity of hobbies and interests that one might expect from genuine American accounts is often missing. Sock-puppet troll accounts are essentially stereotypical stock characters: the conservative vet, the liberal activist, the MAGA firebrand. There is neither need nor reward for creativity or depth.

- Specific details: Besides names and hometowns, these accounts, for obvious reasons, typically avoid clearly identifiable characteristics, such as work details (other than generic professions) or school affiliations.

- Bad grammar or syntax: There is a long-held notion that grammatical and syntactic flaws in posts (most notably, Russian speakers’ difficulty mastering English articles) can be used to unmask Russians posing as Americans online. This is not borne out in the data. Relatively few of the IRA accounts or of those monitored on Hamilton 68 exhibit obvious grammatical issues, at least no more than would be expected from native speakers. To be sure, there are some clunky attempts at humor, bungled idiomatic expressions, and odd name choices (Bertha Malone??), but these are the exception rather than the rule.
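The term-frequency observation under “Mention of nationality” comes down to counting words across profile descriptions. The sketch below shows one minimal way to do that count, assuming the bios are available as a list of strings. The `top_bio_terms` helper, its stopword set, and its tokenizer (lowercase runs of letters, so “#USA” counts as “usa”) are illustrative assumptions, not the procedure used for this report. In keeping with the caution above, a count like this describes aggregate patterns in a known set of accounts; it is not a way to judge any individual account.

```python
import re
from collections import Counter

# Illustrative stopword set (an assumption); a real analysis would use a fuller list.
STOPWORDS = {"a", "am", "an", "and", "i", "in", "me", "my", "of", "the", "to"}


def top_bio_terms(bios, n=5):
    """Return the n most common non-stopword terms across a list of profile bios."""
    counts = Counter()
    for bio in bios:
        # Lowercase, then keep runs of letters; "#USA" and "USA!" both become "usa".
        for token in re.findall(r"[a-z]+", bio.lower()):
            if token not in STOPWORDS:
                counts[token] += 1
    return counts.most_common(n)


if __name__ == "__main__":
    # Invented sample bios, for illustration only.
    sample_bios = [
        "Proud American. USA all the way! #MAGA",
        "Mother, animal lover, New York. USA",
        "United States Navy vet. Follow me #USA",
    ]
    print(top_bio_terms(sample_bios, n=3))
```

`Counter.most_common` breaks ties by first appearance, so terms with equal counts are listed in the order they were first seen.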

Twitter’s recent release of more than 3,800 known IRA troll accounts provides a detailed picture of the visual and biographical tactics Russian trolls use to impersonate Americans on Twitter. The following examples detail individual accounts created to attract users on the left and right of the political spectrum. Note that Twitter redacted certain account names for privacy reasons.

Left Troll Examples

The following examples are recreations of suspended accounts, created using the free Twitter Mockup 2017. The tweets and follower suggestions shown are therefore not accurate.

Right Troll Examples

Operational Features: The View From Above

While individual accounts on Twitter can influence followers, they cannot fundamentally shape the information environment. That requires a network of accounts, often acting in coordination, to help drive messages on and across platforms. It is therefore necessary to take a wide-angle view of information operations that can capture large-scale, multi-pronged efforts, and illuminate the collective efforts of overt, covert, and automated accounts to manipulate the information space.

This is why Hamilton 68 was designed to monitor the aggregate output of a network of 600 Russian-linked accounts rather than the individual outputs of those accounts. At the granular level, messaging priorities are not readily apparent amidst the swirl of daily topics. At the network level, efforts to swarm critics, amplify controversies, and shape geopolitical narratives are far more obvious. But the network view of account activity also reveals efforts to manipulate the digital platforms themselves.

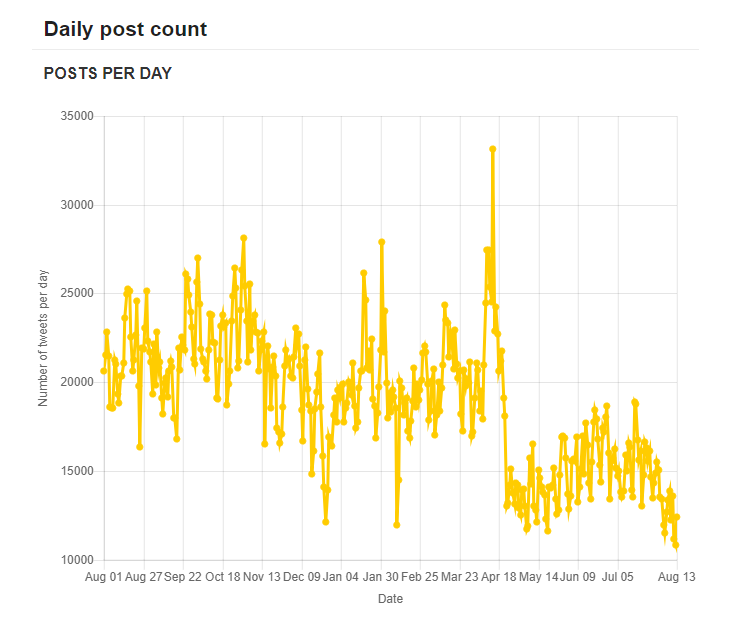

Besides charting the messages and content promoted by Russian-linked accounts, Hamilton 68 also measures the aggregate number of tweets made by monitored accounts on a daily basis. As indicated in Figure 5, Russian-linked accounts have engaged in a persistent and consistent effort over the past year to influence both their American followers and the information environment itself.

Figure 5 – Daily activity of roughly 600 Russian-linked accounts monitored on Hamilton 68. NOTE: The decline in activity around May 2018 is due to the manual removal of bot accounts that were deemed no longer relevant to Russian messaging.

Although daily fluctuations are evident, the posting habits of monitored accounts are notable more for their regularity than their variations.41 This finding highlights several critical points:

- Russian information operations are not election- or event-specific: Reporting on Russian information operations has typically focused on the Kremlin’s efforts to manipulate public opinion around specific western elections or geopolitical events. This has fueled the belief that Kremlin influence campaigns are event-specific actions with clear operational objectives. While influencing elections may be a short-term strategic goal, it is but one objective in a long-term campaign to further polarize and destabilize societies and undermine faith in democratic processes and institutions. The consistent, workmanlike posting habits of these accounts, which suggest a more amorphous operation, bear this out. This is not to say that Russian-linked accounts lack messaging priorities. There are clear spikes in activity, which reveal an elevated interest in certain topics (discussed in detail later in this report). But these topics are confined neither to Russia’s national interests nor to western elections. As detailed in the Khusyaynova criminal complaint, the Internet Research Agency has been active from “at least 2014 to the present.”42 It is therefore critical to conceptualize Kremlin information operations as a continuous, relentless assault, rather than a series of one-off attacks.

- Computational propaganda is a volume business: Across the network, accounts monitored on Hamilton 68 average more than 30 tweets per day each, and many average closer to 100.43 A wide body of psychological research has shown that repeated exposure to a message leads to greater acceptance of that message.44 Repetition (particularly from different sources) creates an illusion of validity, a phenomenon known as the “illusory truth effect.”45 This effect is ripe for exploitation on social media, where the ability to retweet and repost content on and across multiple platforms can significantly influence our perception of the truth.

- Virality is key: The high-volume strategy mentioned above produces computational as well as psychological benefits. By continuously saturating Twitter with an abundance of pro-Kremlin and anti-Western narratives, Russian-linked accounts keep Kremlin-friendly narratives trending while drowning out competing messages. In an online information ecosystem that relies on algorithms to surface content, this can determine the content audiences see online, regardless of whether they engage with Russian-linked networks or not. This is evident in the number of pro-Kremlin outlets that appear in search engine results for topics of interest to the Russian government, be it MH17 or the White Helmets.46 Those results are not accidental. The Khusyaynova criminal complaint outlined the existence of an SEO (search engine optimization) department at the IRA.47 Russian-linked accounts clearly understand that in today’s highly saturated information environment, there is an algorithmic benefit to manipulating engagement. To borrow a phrase from Renee DiResta of New Knowledge, “If you make it trend, you make it true.”48

- Influence is a long game: As previously noted, Russian operators create simplistic, unambiguous American personas to attract genuine users. Freshly minted accounts with low follower counts (or follower counts artificially boosted by fake or automated accounts) are not nearly as effective as those that cultivate large or influential followings. Just as authentic accounts need to build a following through consistent posting and active engagement, so too do inauthentic accounts. That takes both time and effort. In the case of spoofed local news accounts (e.g., @ChicagoDailyNew), the accounts merely retweeted genuine local news articles for years until Twitter suspended them.49 That gave them time to build both an audience and credibility, should they ever need to be operationalized. If the goal is to be in a position to interfere in future events, the work needs to begin well ahead of time.

Figure 6 – Chicago Daily News, an IRA-created Twitter account made to spoof a genuine Chicago news outlet.

Messaging Spikes

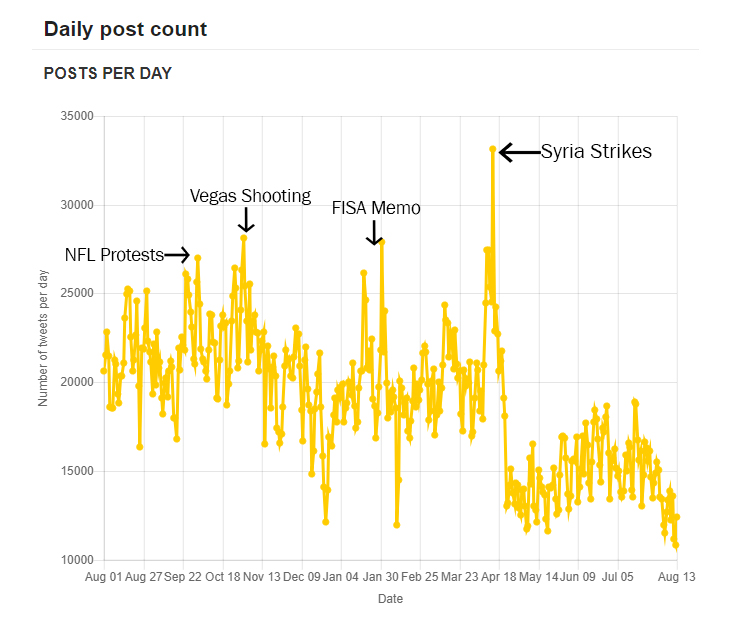

Russian-linked accounts engage in a continuous, daily campaign to influence American voters, but there are days when accounts work overtime. As indicated in Figure 7, notable surges in activity typically coincide with a singular, seminal event. These spikes are telling, as they suggest that the topic du jour is either advantageous or, conversely, detrimental to Moscow’s core objectives.

Figure 7 – Daily activity on Hamilton 68 with “spike” days labelled. Again, it is worth reiterating that the decline in May 2018 was due to the manual removal of repurposed bot accounts and is not an indication of reduced activity.

By far the most active day since the launch of the dashboard was April 14, 2018, the day the United States, U.K., and France launched airstrikes against suspected chemical weapons sites in Syria. It is the only day on which we recorded more than 30,000 tweets (33,139), a roughly 85 percent increase over the prior Saturday. While messaging surges are apparent when Moscow’s interests are threatened, they have also been noted when there is a perceived opportunity to inflame a particular issue or debate in the United States. This provides further evidence that information operations can be both offensive and defensive in nature.

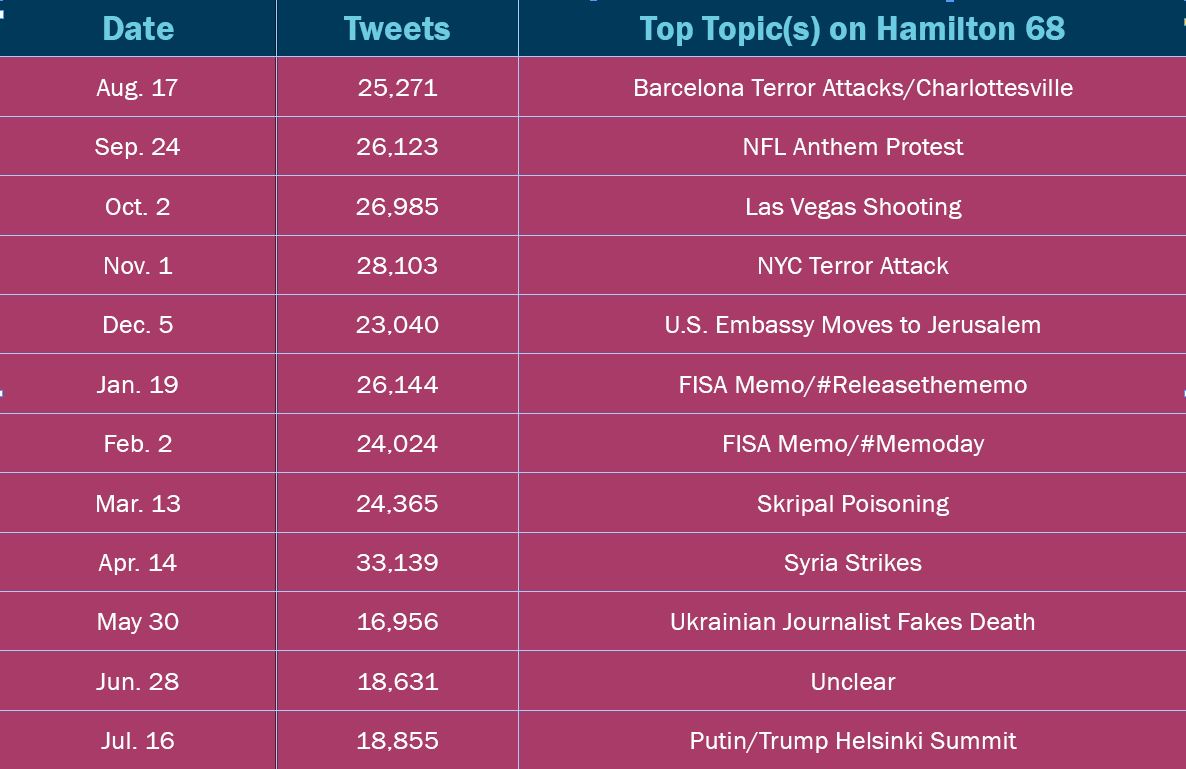

Figure 8 indicates the most active day of posting each month (from August 2017 to July 2018) by accounts monitored on Hamilton 68, along with the most salient topic or topics promoted that day. Topic salience was determined based on a review of the top topics, hashtags, and URLs captured by the dashboard. Importantly, these results only take into consideration content promoted by accounts monitored on Hamilton 68, and do not reflect the popularity of conversations across the entire Twitter platform.

Figure 8 – The most active day of posting each month by accounts monitored on Hamilton 68, along with the most salient topic on that given day.

We used a month-by-month comparison to control for the fact that the overall activity in the network has gradually declined over the course of the year due to the aforementioned manual removal of accounts, actions taken by Twitter (i.e., account suspensions and takedowns), and the natural atrophy that occurs within any network of accounts. Therefore, looking at the most active days over the year as a whole would invariably favor dates closer to the launch of the dashboard. Additionally, surges in activity often unfold over several days, making it likely that a single event — for example, the mass shooting in Las Vegas — would cause several clustered days to register as spike instances.
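The month-by-month selection described above amounts to grouping daily totals by calendar month and keeping each month’s maximum. A minimal sketch, assuming daily totals are available as a mapping from `datetime.date` to tweet count; `monthly_peaks` is a hypothetical helper and the counts in the example are made up. Comparing days only within their own month keeps the network’s gradual shrinkage from favoring early dates, and a surge spread over several adjacent days produces a single entry for that month.

```python
from datetime import date


def monthly_peaks(daily_counts):
    """Return each calendar month's most active day.

    daily_counts: dict mapping datetime.date -> number of tweets that day.
    Returns a dict mapping (year, month) -> (date, count). If two days in a
    month tie, the earlier day is kept.
    """
    peaks = {}
    for day, count in sorted(daily_counts.items()):
        month = (day.year, day.month)
        # Strict ">" keeps the earliest day when counts tie.
        if month not in peaks or count > peaks[month][1]:
            peaks[month] = (day, count)
    return peaks


if __name__ == "__main__":
    # Made-up daily totals; the multi-day surge in September yields one entry.
    counts = {
        date(2017, 9, 23): 120,
        date(2017, 9, 24): 260,
        date(2017, 9, 25): 210,
        date(2017, 10, 1): 90,
        date(2017, 10, 2): 300,
    }
    for (year, month), (day, count) in sorted(monthly_peaks(counts).items()):
        print(f"{year}-{month:02d}: {day.isoformat()} ({count} tweets)")
```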

While it is difficult to prove a causal relationship between an increase in Twitter activity and a specific event (after all, no news story exists in a vacuum), it is possible to determine the messaging priorities of monitored accounts on the most active day of each month. As indicated in Figure 8, in 10 of the 12 months the spike appears closely tied to a single event. The surge in activity in the other two months could not be clearly tied to one event, although in August 2017 two notable events likely contributed to the spike. In four months (March, April, May, and July 2018), a geopolitical event of interest to the Kremlin was likely responsible for the increase in tweets. In three months, a mass shooting or terror attack in the United States was the primary focus. The only single issue that registered in two separate months was the “release the memo” controversy: there was a spike around the original hashtag campaign (#releasethememo) and another, later, when the memo was actually released (#memoday).

In some cases, it is likely that activity on the dashboard simply mirrored broader trends; in others, Russian-linked accounts promoted content and narratives that probably were not widely popular with American audiences. This is an important distinction, but not one that Hamilton data alone can settle.

In the following case studies, however, we attempt to add greater depth to the findings surfaced by the dashboard by examining three spike instances — the NFL anthem protests, the Las Vegas shooting, and the poisoning of Sergei and Yulia Skripal. In two of those cases — the NFL protests and the Las Vegas shooting — we performed content analysis on IRA-associated tweets provided by Clemson University researchers.50 Unfortunately, Twitter’s suspension of IRA accounts throughout the early months of 2018 makes it difficult to crosscheck results from Hamilton after December 2017. In the case of the Skripal messaging campaign, we therefore must rely on Hamilton data alone.

The NFL Anthem Protests

On September 24, 2017, the first Sunday after President Trump urged NFL owners to “fire or suspend” players for kneeling during the national anthem,51 Russian-linked accounts pounced, fanning flames on both sides of the debate as players across the league took part in pre-game demonstrations. It was a uniquely American controversy, one involving race, politics, police brutality, and patriotism — the very issues that Russians have long sought to exploit. In short, it was the perfect cocktail of wedge issues, spiked with America’s most popular and visible sport.

Russian interest in the protests was hardly new. A review of the Clemson data reveals that IRA accounts were tweeting about the protests as early as August 28, 2016 — just two days after a preseason game when San Francisco 49ers quarterback Colin Kaepernick first gained attention for refusing to stand for the national anthem. The protests remained an IRA talking point throughout 2016-2017, with #boycottnfl (145 uses)52 and Kaepernick (1,725 uses)53 seeing heavy engagement prior to September 24, 2017. BlackMattersUS.com, a faux-Black Lives Matter website created by the IRA, also posted several NFL protest articles, including an August 23, 2017 headline reading: “Police Killed at Least 223 Black Americans in the Year after Colin Kaepernick’s First Protest.”54

On September 24, though, messaging on the protests went into overdrive. Accounts monitored on Hamilton 68 sent more than 26,000 tweets, making it the most active day in September and one of the five most prolific days in 2017. Although accounts tweeted about a variety of topics, the top topics that day included “NFL” (2nd), “anthem” (5th), “players” (6th), and “flag” (9th). Four of the 10 most popular hashtags on Hamilton 68 referenced the protests, with the most popular hashtag of the day, #TakeTheKnee, used 106 times. The next day, related metrics revealed that the most linked-to URL in tweets containing the #TakeTheKnee hashtag was an article from TruthFeedNews titled “The NFL Should #TakeTheKnee for Player Violence against Women.”55 According to Ben Elgin of Bloomberg, “Truthfeed content accounted for about 95 percent of the [IRA troll] accounts’ English-language activity” by October 22, 2017.56 Clearly, a feedback loop had been established.

The Clemson University dataset contains 1,140 tweets from 27 English-language “left” and “right” IRA troll accounts on September 24, 2017. The dataset skews heavily to the right — only four tweets targeted the left, all coming from the same account (@WokeLuisa — the aforementioned account posing as an African-American activist). Additionally, 23 of the 26 right troll accounts posted the same messages at nearly identical times, a strong indication that a troll farm employee was using the now-banned practice of “Tweetdecking”57 to post from multiple accounts. This means that the majority of posts were repetitive. Of the 1,140 archived tweets, there were only 68 unique messages; more than 50 of those messages, however, were tweeted by the same 23 right troll accounts (although, oddly, some messages were tweeted by only 16 of the 23 accounts).

As covered earlier in this report, mass repetition of a message is part of the Kremlin’s dissemination strategy, particularly on Twitter. Given that each IRA account likely had different followers (though one would certainly expect some, if not significant, overlap), the audience for each tweet was different. As a result, we coded every tweet individually, regardless of its uniqueness.
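The unique-message count and the “same messages at nearly identical times” pattern can both be checked by grouping tweets on their text, then looking at how many accounts posted each text and how tightly their timestamps cluster. A minimal sketch, assuming tweets are `(account, text, posted_at)` tuples. The function name `coordinated_messages`, the case and whitespace normalization, the 60-second window, and the three-account minimum are illustrative assumptions, not the thresholds used in this report.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def coordinated_messages(tweets, window=timedelta(seconds=60), min_accounts=3):
    """Count unique messages and find texts posted by many accounts at once.

    tweets: iterable of (account, text, posted_at) tuples; posted_at is a datetime.
    Returns (unique_count, clusters), where clusters maps a normalized text to
    the sorted list of accounts that posted it, for texts posted by at least
    min_accounts distinct accounts whose timestamps all fall within window.
    """
    by_text = defaultdict(list)
    for account, text, posted_at in tweets:
        # Normalize case and whitespace so trivial differences do not split a group.
        key = " ".join(text.lower().split())
        by_text[key].append((account, posted_at))

    clusters = {}
    for key, posts in by_text.items():
        accounts = {account for account, _ in posts}
        times = [posted_at for _, posted_at in posts]
        if len(accounts) >= min_accounts and max(times) - min(times) <= window:
            clusters[key] = sorted(accounts)
    return len(by_text), clusters


if __name__ == "__main__":
    t0 = datetime(2017, 9, 24, 12, 0, 0)
    # Hypothetical accounts and texts, for illustration only.
    sample = [
        ("acct_a", "Same message", t0),
        ("acct_b", "same  message", t0 + timedelta(seconds=5)),
        ("acct_c", "Same message", t0 + timedelta(seconds=20)),
        ("acct_d", "A different message", t0),
    ]
    unique, clusters = coordinated_messages(sample)
    print(unique, clusters)
```

Requiring every copy of a text to fall inside one window is a simplification: a message reposted hours later drops out of the cluster, so a fuller version would first split each text's posts into separate time bursts.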

Analysis of the data shows that the NFL protests were the clear focus of IRA trolls on September 24, 2017. Over 60 percent of captured tweets directly or indirectly58 referenced the protests, with #nflboycott (45 uses) and #taketheknee (6 uses) the two most used hashtags of the day. While data was obviously limited for accounts targeting the left, all four of @WokeLuisa’s posts focused on the anthem protests, including: “A so-called POTUS calls white supremacists ‘very fine people’ but athletes protesting injustice ‘sons of bitches’ #TakeTheKnee #TakeAKnee.”59 On the right, accounts struck a very different tone, with 23 different IRA trolls tweeting comments like, “Liberal Snowflakes Have a Complete MELTDOWN Over Trump’s NFL Comments.”60

Clearly, there was striking overlap between the themes promoted by IRA accounts and the themes surfaced by the dashboard; in fact, the aforementioned TruthFeedNews article that highlighted domestic abuse by NFL players (the top URL associated with the #taketheknee hashtag on Hamilton 68) was also tweeted by 23 different troll accounts on September 25, 2017. Over the next three months, the NFL protests remained a near-constant talking point, both on the dashboard and within known IRA circles.

Las Vegas Shooting

The mass shooting in Las Vegas is a prime example of an event that was of no particular importance to Russia from a policy perspective. It was, however, an offensive opportunity to pick at the scabs of America’s gun-control debate, and to spread fear-mongering messages and anti-government conspiracy theories.

On October 2, 2017, in the hours after the nighttime massacre on the Vegas strip, accounts monitored on Hamilton 68 sent nearly 27,000 tweets. Among the 10 most discussed topics were Las Vegas (1st), guns (3rd), victims (4th), shooter (7th), and shooting (9th). The focus on the Vegas shooting was hardly surprising given the national interest in the tragedy, but Russian-linked accounts primarily amplified partisan and conspiratorial narratives. Nine of the 10 most linked-to URLs featured stories attacking liberals or spreading conspiracy theories about the shooter’s motives or ideology, including “House Democrat REFUSES to Stand for a Moment of Silence for Vegas Victims”61 and “FBI Source: Vegas Shooter Found with Antifa Literature, Photos Taken in Middle East.”62

The Clemson University dataset provides tweets from 31 English-language IRA troll accounts on October 2, 2017.63 Of those, only two (@FIGHTTORESIST and @BLACKTOLIVE) were categorized as “left” trolls; the remainder adopted right-leaning personas. As with the NFL protests, 23 right-leaning accounts posted the exact same message at nearly identical times, while six right troll accounts posted unique messages. Of the tweets sent from left and right troll accounts, roughly one-third focused on the Vegas shooting. The ongoing NFL protests and attacks against the Mayor of San Juan, Puerto Rico (who criticized President Trump’s handling of Hurricane Maria recovery efforts), were the other dominant topics.

In Vegas-related tweets, the narratives largely echoed those noted on Hamilton 68 — partisan blame games, fear mongering, and conspiracy theories about the shooter’s motives or affiliations. One account, @TheFoundingSon, a right troll account that sported a banner image of a pistol and claimed to be a conservative Christian supporter of gun rights, managed to include all three narratives when “he” tweeted: “Las Vegas attack: #ISIS or liberals?”64

But the shooting also became a recurring theme for accounts targeting the left. As recently as May 2018, @kaniJJackson, a faux member of the #resistance, tweeted: “AR-15: Orlando Las Vegas Sandy Hook San Bernardino Pulse Parkland HS Waffle House Santa Fe High School #GunControlNow #Texas,”65 and “Trump has shown more outrage over Tomi Lahren having a drink thrown at her than he did over the Vegas, Parkland and Santa Fe shootings combined.”66

While the initial burst of Vegas-related tweets almost certainly mirrored a spike in general Twitter traffic, the consistent engagement by both left and right troll accounts suggests that the tragedy was viewed as a messaging opportunity. Similar engagement was also noted after the school shooting in Parkland, Florida, when Russian-linked accounts again amplified partisan and conspiratorial messages. This highlights the purely disruptive nature of these operations, given that neither tragedy touches on Russia’s national interests.

The Skripal Affair

The poisoning of Sergei and Yulia Skripal in Salisbury, England, differs from the prior two cases in that it was an incident that directly involved the Russian state. As a result, the messaging campaign launched by the Kremlin was both more aggressive and more overt. In the initial aftermath of the poisoning, official Russian government accounts flatly denied the accusations, spread unfounded counter-accusations, and mocked the British government and media, all while crying “Russophobia.”67 Black and gray accounts amplified those narratives, while simultaneously attacking critics and inserting even wilder conspiracy theories into the mix, some of which were then regurgitated by the Russian media. The “all-hands-on-deck” approach deployed by pro-Kremlin accounts created a cacophony of competing narratives that reached a crescendo on March 13, 2018 — the day after the U.K. government named Novichok as the nerve agent used in the attack.

On Hamilton 68, seven of the top 10 URLs focused on the Skripal case, and RT and Sputnik were the two most linked-to sites, by a wide margin. The top two URLs shared by monitored accounts on March 13 were from pro-Kremlin blogs. Their headlines (“The Novichok Story Is Indeed another Iraqi WMD Scam” and “Theresa Mays Novichok Claims Fall Apart”) echoed attempts by the Russian government to discredit the Skripal investigation by linking it to the U.S. and U.K. justification for the Iraq war. Perhaps more interesting, though, was the promotion of a 1999 New York Times article detailing U.S. efforts to clean up a chemical arms plant in Uzbekistan.68 Within hours of the U.K. naming Novichok as the nerve agent, the article began to pinball around Russian-linked accounts monitored by the dashboard. The next day, the narrative was picked up by Sputnik, which ran an article asserting that the United States “had access to the chemical used to poison Skripal since 1999.”69

The feedback loop created by various account types shows the ability of Russia’s messaging machine to flood the information space during critical moments. According to the U.K. Foreign Office, the Russian government and state media presented 37 different narratives regarding the Skripal poisoning.70 Unlike messages related to U.S. social and political issues, these narratives were, by and large, created by the Russian government, Russian media, or overt sympathizers. When Russia’s interests are at stake, Russian-linked accounts shift not only to overtly pro-Kremlin messages but also to pro-Kremlin messengers.

Measuring Impact

Hamilton 68 does not measure the spread of content across the entire Twitter platform. It would therefore be impossible to claim, based on Hamilton data alone, that Russian-linked accounts are “driving the conversation” on a particular topic. Even if we could compare Russian-linked networks monitored on Hamilton 68 to other networks, measuring influence based solely on engagement metrics would be highly flawed. We therefore use a few key indicators to gauge when Russian-linked efforts might be influencing a debate:

- Intensity of engagement: The overall volume of tweets, as measured against typical patterns of behavior. This metric looks at both the aggregate output of monitored networks and engagement with particular hashtags, stories, or topics in order to identify peaks or spikes in activity. As detailed earlier, these spikes are telling, as they suggest that a news story or event is of particular interest or concern to Moscow.

- Focus of engagement: The percentage of tweets dedicated to a specific topic during a specific period of time. If we consider tweets to be a finite resource, this measures the investment of resources into a particular narrative.

- Longevity of engagement: The length of time a topic or narrative is actively promoted. This temporal analysis assesses efforts to keep a topic relevant and trending, and it must be measured against the wider salience of a topic in the ever-shifting news cycle. An ongoing issue will clearly receive some measure of ongoing coverage, so this indicator looks at issues that continue to be promoted after wider interest has faded.

- Narrative influence: The introduction or amplification of new narratives, or of narratives not widely shared in organic American networks. This is somewhat subjective, but there is a clear difference between cases in which Russian-linked accounts jump on a bandwagon and cases in which they drive a particular narrative.
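Three of these indicators reduce to simple arithmetic over daily tweet counts; narrative influence, as noted, remains a qualitative judgment. The sketch below is one minimal way to compute the other three, assuming the network's daily totals and a topic's daily counts are plain lists of integers. The function names, the seven-day trailing baseline, and the `min_daily` threshold are hypothetical choices for illustration, not the parameters used on Hamilton 68. Note that `longevity` only measures how long the monitored network kept a topic going; judging whether that outlasted wider interest, as the indicator requires, would need data from outside the network.

```python
from statistics import mean


def intensity(daily_totals, day_index, baseline_days=7):
    """Ratio of one day's tweet volume to the mean of the preceding baseline_days.

    A ratio of 1.85, for example, means an 85 percent increase over the baseline.
    """
    baseline = daily_totals[max(0, day_index - baseline_days):day_index]
    if not baseline:
        raise ValueError("need at least one earlier day to form a baseline")
    avg = mean(baseline)
    return daily_totals[day_index] / avg if avg else float("inf")


def focus(topic_tweets, total_tweets):
    """Share (0.0 to 1.0) of all tweets in a period that address one topic."""
    return topic_tweets / total_tweets if total_tweets else 0.0


def longevity(topic_daily_counts, min_daily=1):
    """Longest run of consecutive days on which a topic got at least min_daily tweets."""
    longest = current = 0
    for count in topic_daily_counts:
        current = current + 1 if count >= min_daily else 0
        longest = max(longest, current)
    return longest


if __name__ == "__main__":
    # Made-up numbers for illustration only.
    totals = [100, 110, 90, 100, 100, 100, 100, 185]
    print(round(intensity(totals, 7), 2))  # prints 1.85 (185 / mean of prior 7 days = 100)
    print(focus(60, 100))                  # prints 0.6
    print(longevity([0, 3, 4, 5, 0, 2]))   # prints 3
```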

The three case studies examined in this report show signs of intensity, focus, and longevity of engagement (although the latter metric is not covered in detail). However, narrative influence seems to be far more common when accounts engage in geopolitical debates. The Skripal campaign highlights an active effort to manipulate global consensus by inserting multiple counter-narratives to distract from western messages and discredit western messengers. The promotion of Kremlin-controlled domains also indicates that when Russia’s strategic goals are at stake, Russia turns to its own messaging apparatus to shape the debate.

In the case of the anthem protests and Vegas shooting, on the other hand, Russian-linked accounts merely amplified the most divisive American voices and narratives. That is not to dismiss the Kremlin’s role in further exacerbating America’s preexisting societal conditions. Clearly, a steady drumbeat of negative, partisan, and conspiratorial rhetoric is not beneficial to public discourse. But it is also safe to assume that plenty of authentic American accounts would engage in hyper-partisan, toxic behavior, regardless of Russian influence.

At the same time, it is now clear that Russian-linked information operations on social media reached a significant number of real Americans. It is impossible to dismiss the possibility that at least some of those Americans were influenced by online campaigns, often under false pretenses. It is therefore essential that we continue to study the threat, as the tactics and techniques used on social media will likely evolve as quickly as the technology itself.

- Internet Research Agency tweets captured by Darren Linvill and Patrick Warren of Clemson University and published by Oliver Roeder, “Why We’re Sharing 3 Million Russian Troll Tweets,” FiveThirtyEight, July 31, 2018, https://fivethirtyeight.com/features/why-were-sharing-3-million-russian-troll-tweets/.

- @TEN_GOP, Twitter, August 14, 2017, retrieved from Internet Archive website: https://web.archive.org/web/20170814211128/https://twitter.com/ten_gop.

- @TEN_GOP, “McMaster fires another Trump loyalist Ezra Cohen-Watnick from the National Security Council. RETWEET if you think McMaster needs to go! https://t.co/TXDbbQCxM6,” Twitter, August 2, 2017, retrieved from Russiatweets.com, https://russiatweets.com/tweet/2608703.

- “Facebook, Google, and Twitter Executives on Russia Election Interference,” C-SPAN, November 1, 2017, https://www.c-span.org/video/?436360-1/facebook-google-twitter-executives-testify-russias-influence-2016-election.

- Robert S. Mueller, III, United States of America v. Viktor Borisovich Netyksho, Boris Alekseyevich Antonov, Dmitriy Sergeyevich Badin, Ivan Sergeyevich Yermakov, Aleksey Viktorovich Lukashev, Sergey Aleksandrovich Morgachev, Nikolay Yuryevich Kozachek, Pavel Vyacheslavovich Yershov, Artem Andreyevich Malyshev, Aleksandr Vladimirovich Osadchuk, Aleksey Aleksandrovich Potemkin, and Anatoliy Sergeyevich Kovalev, No. 1:18-cr-00215-ABJ (United States District Court for the District of Columbia July 13, 2018), https://www.justice.gov/file/1035477/download.

- “Assessing Russian Activities and Intentions in Recent US Elections,” Office of the Director of National Intelligence, January 6, 2017, https://www.dni.gov/files/documents/ICA_2017_01.pdf.

- Hamilton 68 is a collaboration between the Alliance for Securing Democracy and four outside researchers: Clint Watts, J.M. Berger, Andrew Weisburd, and Jonathon Morgan. https://dashboard.securingdemocracy.org/.

- J.M. Berger, “The Methodology of the Hamilton 68 Dashboard,” Alliance for Securing Democracy, August 7, 2017, https://securingdemocracy.gmfus.org/the-methodology-of-the-hamilton-68-dashboard/.

- Our efforts appeared to pay early dividends: Peter Baker cited the dashboard in an August 4, 2017 New York Times article highlighting Russian attempts to amplify the #FireMcMaster hashtag campaign. See Peter Baker, “Trump Defends McMaster against Calls for His Firing,” The New York Times, August 4, 2017, https://www.nytimes.com/2017/08/04/us/politics/trump-mcmaster-national-security-conservatives.html.

- Jean-Baptiste Jeangène Vilmer, Alexandre Escorcia, Marine Guillaume, and Janaina Herrera, “Information Manipulation: A Challenge for Democracies,” a joint report by the Policy Planning Staff, Ministry for Europe and Foreign Affairs and the Institute for Strategic Research, Ministry for the Armed Forces, August 2018, https://www.diplomatie.gouv.fr/IMG/pdf/information_manipulation_rvb_cle838736.pdf.

- “How to Interpret the Hamilton 68 Dashboard,” Alliance for Securing Democracy, https://securingdemocracy.gmfus.org/toolbox/how-to-interpret-the-hamilton-68-dashboard-key-points-and-clarifications/.

- Data archive provided by Twitter, October 17, 2018, https://about.twitter.com/en_us/values/elections-integrity.html#data.

- Daniel Holt, United States of America v. Elena Alekseevna Khusyaynova, No. 1:18-MJ-464 (United States District Court, Alexandria, Virginia, September 28, 2018), https://www.justice.gov/usao-edva/press-release/file/1102591/download.

- Clint Watts, “Russia’s Active Measures Architecture: Task and Purpose,” Alliance for Securing Democracy, May 22, 2018, https://securingdemocracy.gmfus.org/russias-active-measures-architecture-task-and-purpose/.

- Data archive provided by Twitter, October 17, 2018, https://about.twitter.com/en_us/values/elections-integrity.html#data.

- Bret Schafer and Sophie Eisentraut, “Russian Infowar Targets Transatlantic Bonds,” The Cipher Brief, March 30, 2018, https://www.thecipherbrief.com/russian-infowar-targets-transatlantic-bonds.

- Christopher Paul and Miriam Matthews, “The Russian ‘Firehose of Falsehood’ Propaganda Model,” RAND Corporation, 2016, https://www.rand.org/pubs/perspectives/PE198.html.

- See, for example, Max Boot, “Russia Has Invented Social Media Blitzkrieg,” Foreign Policy, October 13, 2017, https://foreignpolicy.com/2017/10/13/russia-has-invented-social-media-blitzkrieg/.

- Daniel Holt, United States of America v. Elena Alekseevna Khusyaynova, No. 1:18-MJ-464 (United States District Court, Alexandria, Virginia, September 28, 2018), https://www.justice.gov/usao-edva/press-release/file/1102591/download.

- Denise Clifton, “Putin’s Trolls Are Targeting Trump’s GOP Critics—Especially John McCain,” Mother Jones, January 12, 2018, https://www.motherjones.com/politics/2018/01/putins-trolls-keep-targeting-john-mccain-and-other-gop-trump-critics/.

- “As the Trump Dossier Scandal Grows and Implicates Him, McCain checks into Hospital,” True Pundit, December 13, 2017, https://truepundit.com/as-trump-dossier-scandal-grows-and-implicates-him-mccain-checks-into-hospital/.

- Daniel Holt, United States of America v. Elena Alekseevna Khusyaynova, No. 1:18-MJ-464 (United States District Court, Alexandria, Virginia, September 28, 2018), https://www.justice.gov/usao-edva/press-release/file/1102591/download.

- Saranac Hale Spencer, “More Claims of Alabama Voter Fraud,” Factcheck.org, December 19, 2017, https://www.factcheck.org/2017/12/claims-alabama-voter-fraud/.

- @fighttoresist, “FBI chief: Trump hasn’t directed me to stop Russian meddling in midterms We know why Trump doesn’t want to act against Russia,” Twitter, February 13, 2018, retrieved from Russiatweets.com, https://russiatweets.com/tweet/1076851.

- Brad Heath, “Nunes Memo Release: What you need to know about the controversial document,” USA Today, February 4, 2018, https://www.usatoday.com/story/news/politics/2018/02/02/fisa-surveillance-nunes-memo-allegations/300583002/.

- @barabarafortrump, Twitter, January 29, 2018, retrieved from Russiatweets.com, https://russiatweets.com/tweet/272211.

- @wokeluisa, Twitter, February 2, 2018, retrieved from Russiatweets.com, https://russiatweets.com/tweet/2870340.

- Daniel Holt, United States of America v. Elena Alekseevna Khusyaynova, No. 1:18-MJ-464 (United States District Court, Alexandria, Virginia, September 28, 2018), https://www.justice.gov/usao-edva/press-release/file/1102591/download.

- World traffic ranking retrieved on October 25, 2016 from Similarweb.com, https://www.similarweb.com/website/stalkerzone.org and https://www.similarweb.com/website/dninews.com.

- Tom O’Connor, “Syria at War: As U.S. Bombs Rebels, Russia Strikes ISIS and Israel Targets Assad,” Newsweek, March 17, 2017, https://www.newsweek.com/syria-war-us-rebels-russia-isis-israel-569812.

- @wokeluisa, Twitter, April 7, 2017, retrieved from Russiatweets.com, https://russiatweets.com/tweet/2871172.

- @wokeluisa, Twitter, April 7, 2017, retrieved from Russiatweets.com, https://russiatweets.com/tweet/2871172.

- “Soviet Influence Activities: A Report on Active Measures and Propaganda, 1986–87,” United States Department of State, August 1987, retrieved from https://www.globalsecurity.org/intell/library/reports/1987/soviet-influence-activities-1987.pdf.

- Alvin A. Snyder, Warriors of Disinformation: How Lies, Videotape, and USIA Won the Cold War, New York: Arcade Publishing, 1995.

- “Deadly Experiments: Georgian Ex-Minister Claims US-funded Facility may be Bioweapons Lab,” RT.com, September 16, 2018, https://www.rt.com/news/438543-georgia-us-laboratory-bio-weapons/.

- On September 13, the blog post was among the top 10 most linked-to URLs on Hamilton 68. Dilyana Gaytandzhieva, “U.S. Diplomats Involved in Trafficking of Human Blood and Pathogens for Secret Military Program,” Dilyana.Bg, September 12, http://dilyana.bg/us-diplomats-involved-in-trafficking-of-human-blood-and-pathogens-for-secret-military-program/.

- Andrew Higgins, “Maybe Private Russian Hackers Meddled in Election, Putin Says,” The New York Times, June 1, 2017, https://www.nytimes.com/2017/06/01/world/europe/vladimir-putin-donald-trump-hacking.html.

- Tim Mak, “Russian Influence Campaign Sought to Exploit Americans’ Trust in Local News,” NPR, July 12, 2018, https://www.npr.org/2018/07/12/628085238/russian-influence-campaign-sought-to-exploit-americans-trust-in-local-news.

- Darren Linvill and Patrick Warren, “Troll Factories: The Internet Research Agency and State-Sponsored Agenda Building,” The Social Media Listening Center, Clemson University, undated working paper, http://pwarren.people.clemson.edu/Linvill_Warren_TrollFactory.pdf.

- Data archive provided by Twitter, October 17, 2018, https://about.twitter.com/en_us/values/elections-integrity.html#data.

- Of note, the significant decline in activity after May 2018 does not indicate reduced activity by monitored accounts or more rigorous enforcement by Twitter; it reflects the removal of several accounts (mainly automated accounts) from the dataset after a manual review determined that they had been repurposed and were therefore no longer relevant.

- Daniel Holt, United States of America v. Elena Alekseevna Khusyaynova, No. 1:18-MJ-464 (United States District Court, Alexandria, Virginia, September 28, 2018), https://www.justice.gov/usao-edva/press-release/file/1102591/download.

- The average number of daily tweets varies drastically among accounts. Accounts likely operated by professional trolls or that use automation can average over 100 tweets per day.

- This phenomenon was first detailed in a 1977 study by Lynn Hasher, David Goldstein, and Thomas Toppino, “Frequency and the Conference of Referential Validity,” Journal of Verbal Learning and Verbal Behavior, 16 (1): 107–112, 1977.

- Daniel Kahneman, Thinking, Fast and Slow, New York: Farrar, Straus and Giroux, 2011.

- Bradley Hanlon, “From Nord Stream to Novichok: Kremlin Propaganda on Google’s Front Page,” Alliance for Securing Democracy, June 14, 2018, https://securingdemocracy.gmfus.org/from-nord-stream-to-novichok-kremlin-propaganda-on-googles-front-page/.

- Daniel Holt, United States of America v. Elena Alekseevna Khusyaynova, No. 1:18-MJ-464 (United States District Court, Alexandria, Virginia, September 28, 2018), https://www.justice.gov/usao-edva/press-release/file/1102591/download.

- Renee DiResta, “Computational Propaganda: If you make it trend, you make it true,” The Yale Review, October 12, 2018, https://yalereview.yale.edu/computational-propaganda.

- Tim Mak, “Russian Influence Campaign Sought to Exploit Americans’ Trust in Local News,” NPR, July 12, 2018, https://www.npr.org/2018/07/12/628085238/russian-influence-campaign-sought-to-exploit-americans-trust-in-local-news.

- The Clemson University data was chosen for analysis (rather than the full IRA data provided by Twitter) due to its searchability.

- Abby Phillip and Cindy Boren, “Players, Owners Unite as Trump Demands NFL ‘Fire or Suspend’ Players or Risk Fan Boycott,” The Washington Post, September 24, 2017, https://www.washingtonpost.com/news/post-politics/wp/2017/09/24/trump-demands-nfl-teams-fire-or-suspend-players-or-risk-fan-boycott/?utm_term=.1ee4f6431211.

- Data retrieved from Russiatweets.com, October 23, 2017, https://russiatweets.com/tweet-search?terms=%23boycottnfl&language=&region=&start_date=&end_date=2017-09-24&orderby=&author=.

- Data retrieved from Russiatweets.com, October 23, 2017, https://russiatweets.com/tweet-search?terms=kaepernick&language=&region=&start_date=&end_date=2017-09-24&orderby=&author=.

- Ken Patterson, “Police Killed At Least 223 Black Americans In The Year After Colin Kaepernick’s First Protest,” BlackMattersUS.com, August 23, 2017, https://blackmattersus.com/36345-police-killed-at-least-223-black-americans-in-the-year-after-colin-kaepernicks-first-protest/.

- Amy Moreno, “The NFL should #TakeTheKnee for Player Violence against Women,” TruthFeedNews.com, September 25, 2017, https://truthfeednews.com/the-nfl-should-taketheknee-for-player-violence-against-women/.

- Ben Elgin, “Russian Trolls Amped Up Tweets for Pro-Trump Website’s Content,” Bloomberg, August 15, 2018, https://www.bloomberg.com/news/articles/2018-08-15/russian-trolls-amped-up-tweets-for-pro-trump-website-s-content.

- Julia Reinstein, “Twitter Is Trying To Kill ‘Tweetdecking.’ Here’s What You Should Know,” BuzzFeed News, February 21, 2018, https://www.buzzfeednews.com/article/juliareinstein/twitter-is-making-changes-to-try-and-kill-the-tweetdeckers.

- Tweets that mentioned protests in other professional sports leagues (MLB and NBA) were coded as part of the broader NFL debate.

- @WokeLuisa, “A so-called POTUS calls white supremacists ‘very fine people’ but athletes protesting injustice ‘sons of bitches’ #TakeTheKnee #TakeAKnee,” Twitter, September 24, 2017, retrieved from Russiatweets.com.

- @Caadeenrrs et al., “Liberal Snowflakes Have a Complete MELTDOWN Over Trump’s NFL Comments,” Twitter, September 24, 2017, retrieved from Russiatweets.com.

- Eren Moreno, “House Democrat REFUSES to Stand for a Moment of Silence for Vegas Victims,” TruthFeedNews, October 2, 2017, https://truthfeednews.com/house-democrat-refuses-to-stand-for-a-moment-of-silence-for-vegas-victims/.

- “FBI Source: Vegas Shooter Found with Antifa Literature, Photos Taken in Middle East,” InfoWars, October 2, 2017, https://www.infowars.com/fbi-source-vegas-shooter-found-with-antifa-literature-photos-taken-in-middle-east/.

- This number does not include accounts that were categorized as “newsfeed” accounts or 666STEVEROGERS, an account whose posts were not relevant.

- @TheFoundingSon, “Las Vegas attack: #ISIS or liberals?” Twitter, October 2, 2017, retrieved from Russiatweets.com, https://russiatweets.com/tweet/2615398.

- @KaniJJackson, “AR-15: Orlando Las Vegas Sandy Hook San Bernardino Pulse Parkland HS Waffle House Santa Fe High School #GunControlNow #Texas,” Twitter, May 18, 2018, retrieved from Russiatweets.com, https://russiatweets.com/tweet/1344479.

- @KaniJJackson, “Trump has shown more outrage over Tomi Lahren having a drink thrown at her than he did over the Vegas, Parkland and Santa Fe shootings combined,” Twitter, May 23, 2018, retrieved from Russiatweets.com, https://russiatweets.com/tweet/1495476.

- For a recap of the Russian government response, see, for example, Alexey Kovalev, “Who, us? Russia is Gaslighting the World on the Skripal Poisonings,” The Guardian, May 25, 2018, https://www.theguardian.com/commentisfree/2018/may/25/russia-skripal-poisoning-state-television-russian-embassy.

- Judith Miller, “U.S. and Uzbeks Agree on Chemical Arms Plant Cleanup,” The New York Times, May 25, 1999, https://www.nytimes.com/1999/05/25/world/us-and-uzbeks-agree-on-chemical-arms-plant-cleanup.html.

- “US Had Access to Substance Allegedly Used to Poison Skripal Since 1999 – Report,” Sputniknews.com, March 14, 2018, https://sputniknews.com/world/201803141062510743-skripal-case-novichok-us-uzbekistan/.

- Nathan Hodge and Laura Smith-Spark, “Russians accused over Salisbury poisoning were in city ‘as tourists,’” CNN, September 14, 2018, https://www.cnn.com/2018/09/13/europe/russia-uk-skripal-poisoning-suspects-intl/index.html.